June 17, 2025

Hello. I'm excited to be here today to talk to you about software in the era of AI. I'm told that many of you are students (bachelor's, master's, PhD, and so on) and you're about to enter the industry. I think it's an extremely unique and very interesting time to enter the industry right now.

I think fundamentally the reason for that is that software is changing again. I say "again" because I actually gave this talk already. But the problem is that software keeps changing. So I actually have a lot of material to create new talks, and I think it's changing quite fundamentally.

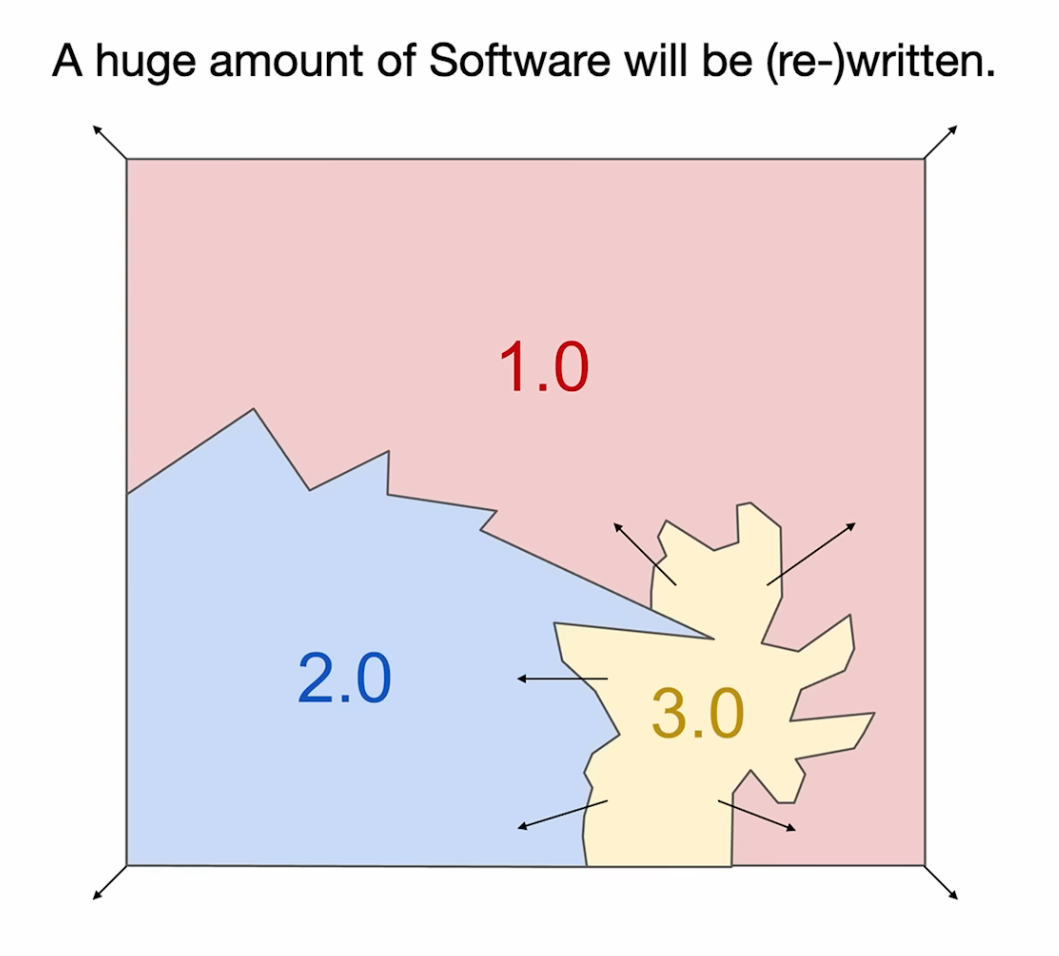

I think roughly speaking, software has not changed much on such a fundamental level for 70 years. Then it's changed about twice quite rapidly in the last few years. So there's just a huge amount of work to do, a huge amount of software to write and rewrite.

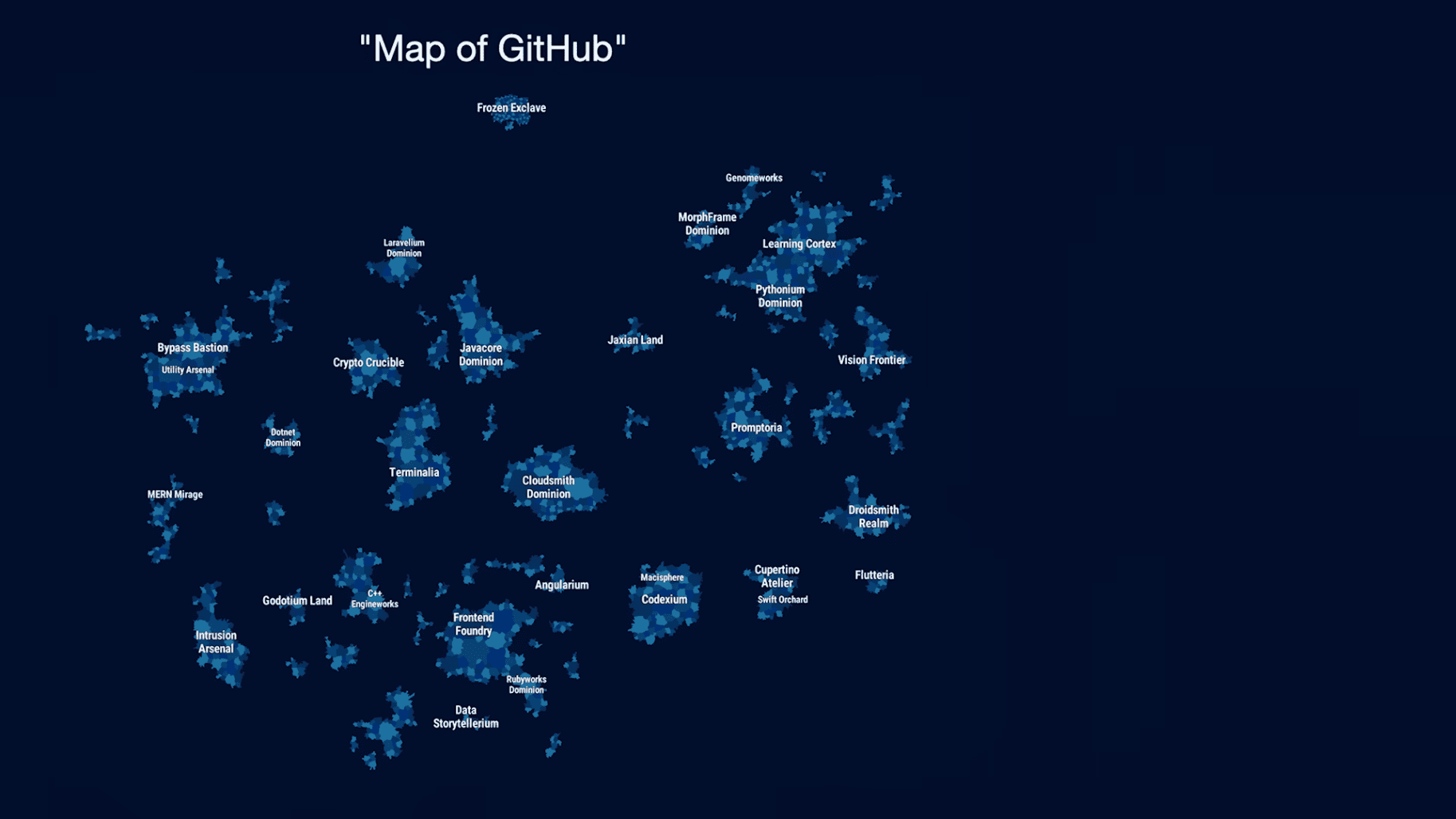

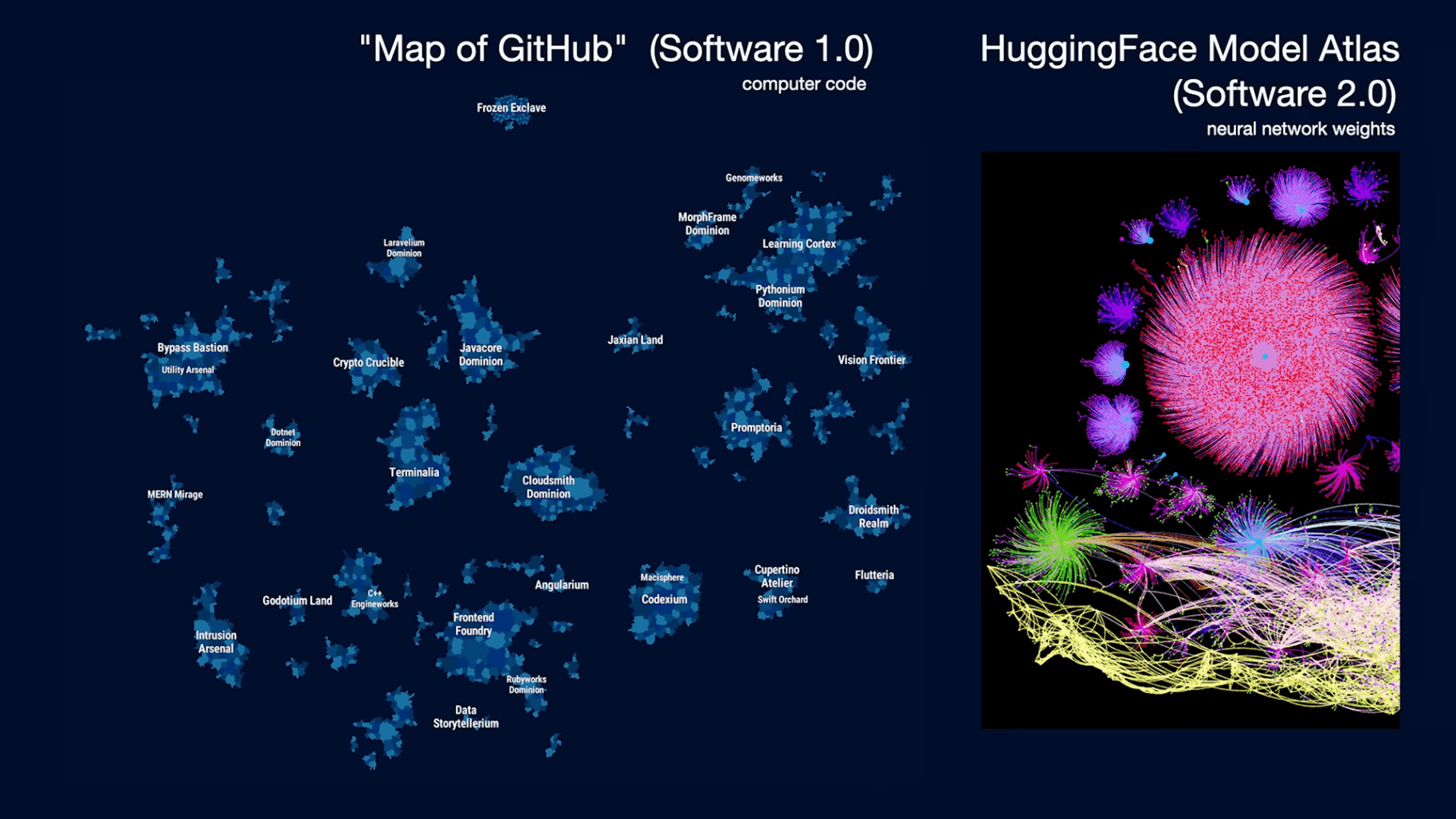

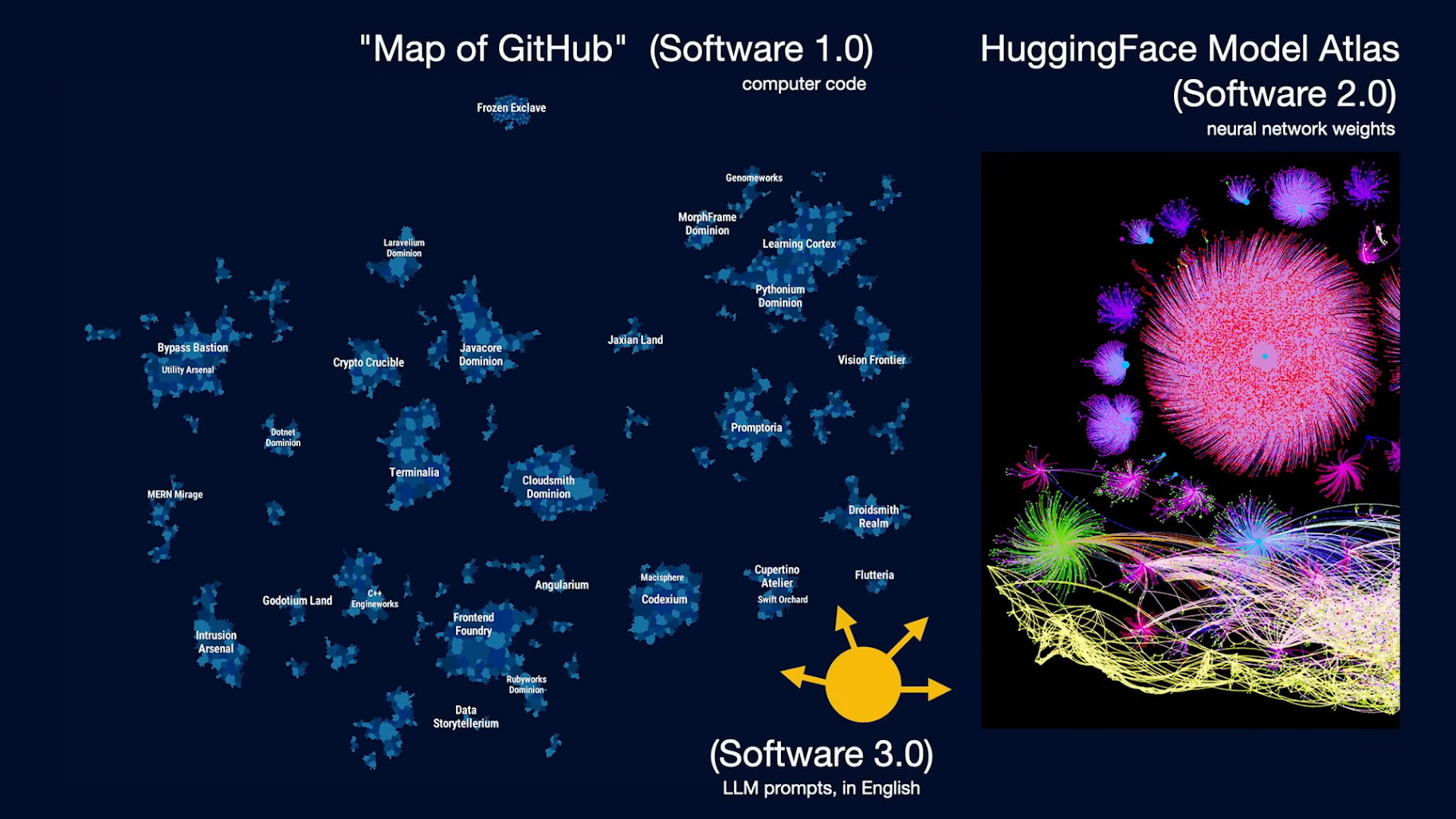

Let's take a look at the realm of software. If we think of this as the map of software, this is a really cool tool called "Map of GitHub." This is all the software that's written. These are instructions to the computer for carrying out tasks in the digital space.

If you zoom in here, these are all different kinds of repositories, and this is all the code that has been written.

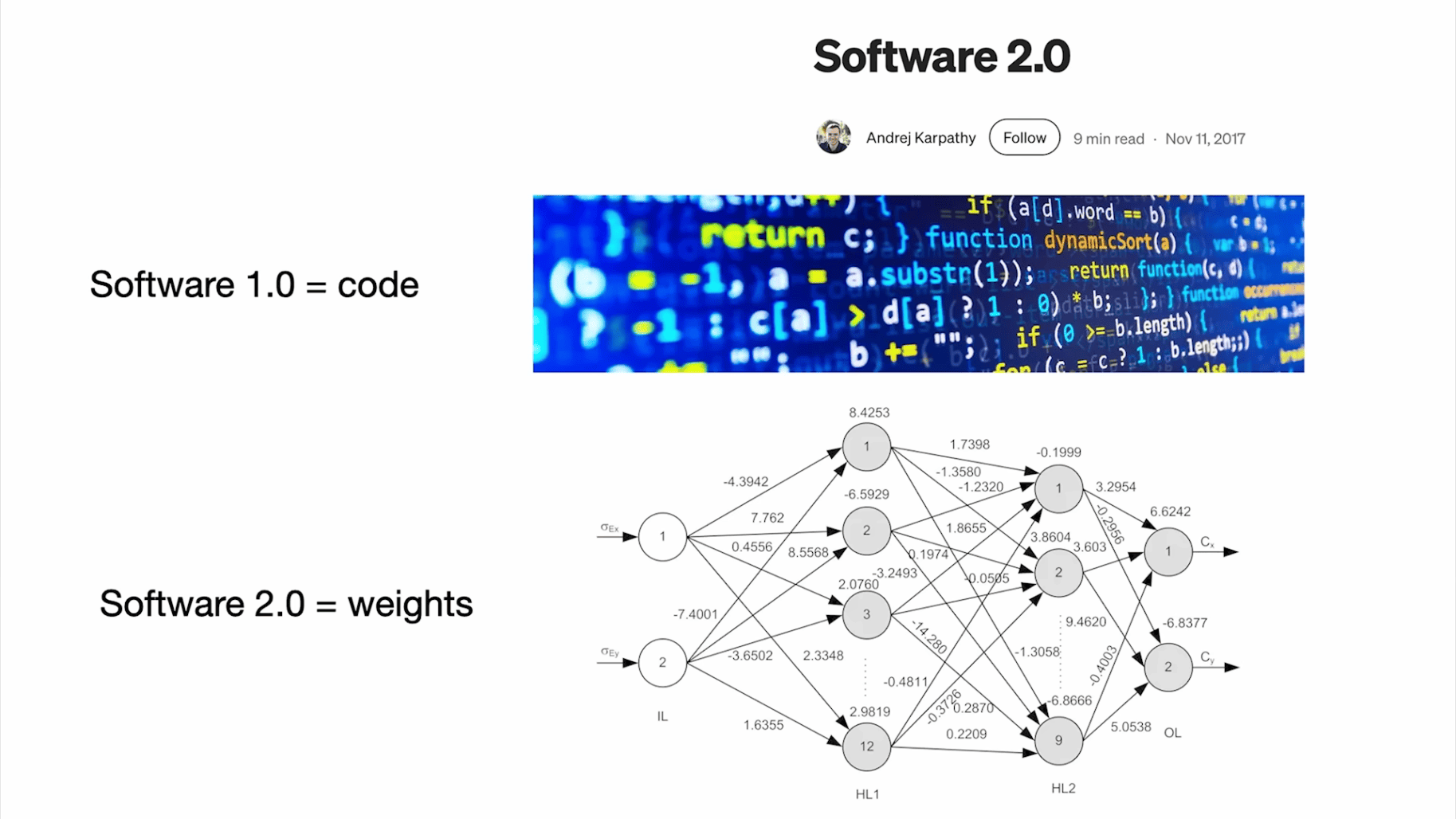

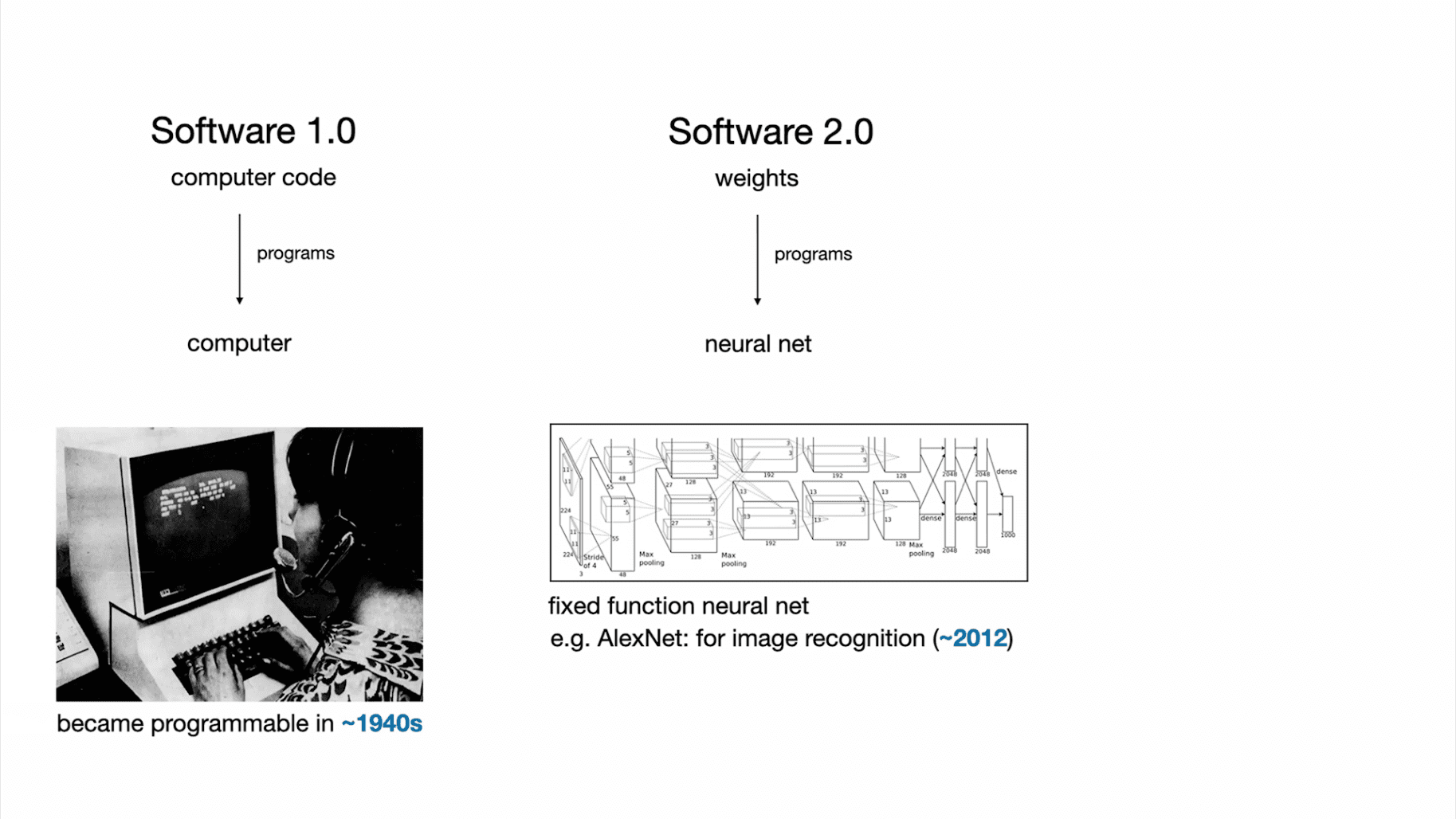

A few years ago, I observed that software was changing, and there was a new type of software around, and I called this "Software 2.0" at the time. The idea here was that Software 1.0 is the code you write for the computer. Software 2.0 now are neural networks and, in particular, the weights of a neural network. You're not writing this code directly; you are more tuning the datasets, and then you're running an optimizer to create the parameters of this neural net.

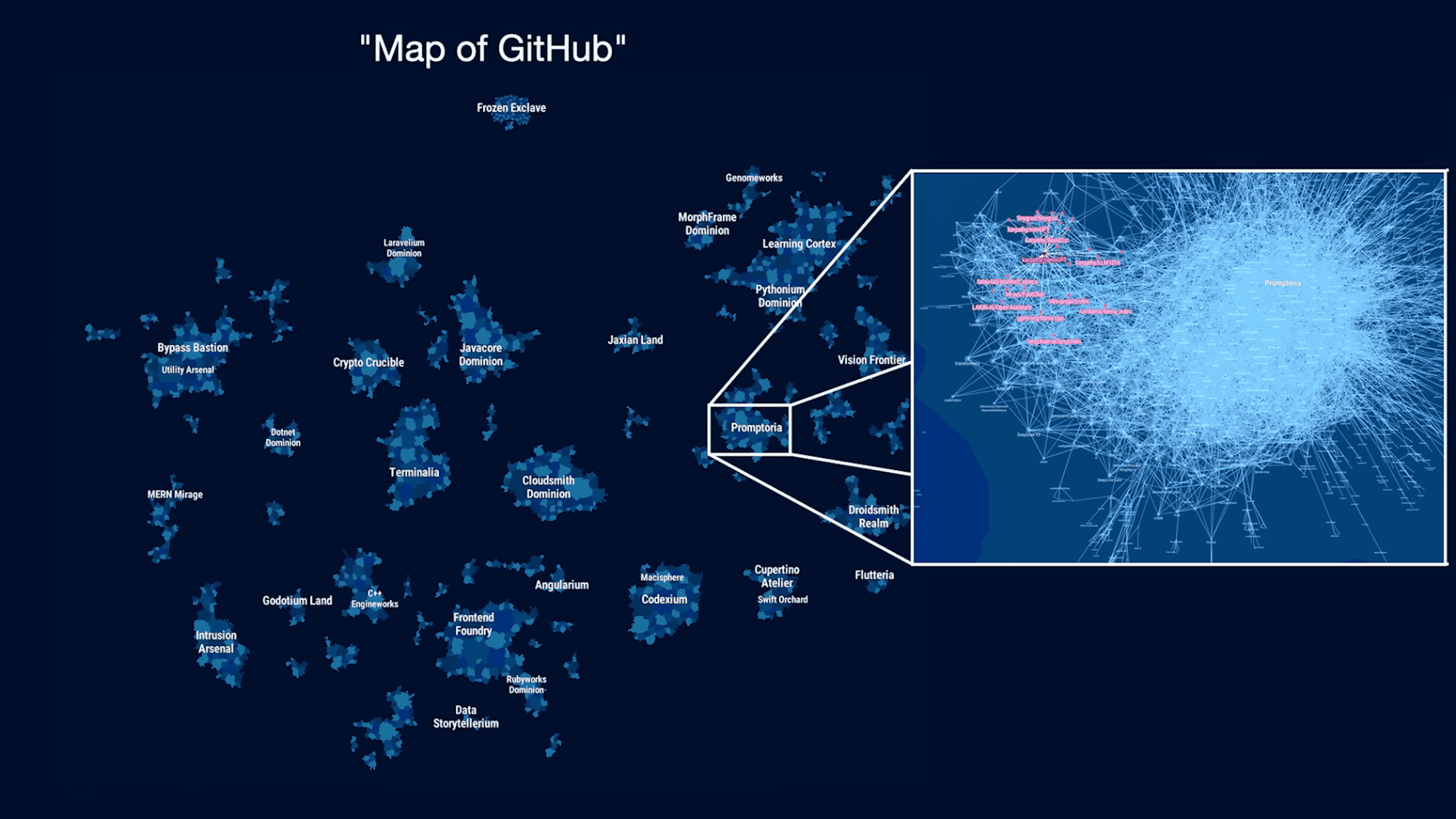

At the time, neural nets were seen as just a different kind of classifier, like a decision tree or something like that, so I think the Software 2.0 framing was a lot more appropriate. Now, what we have is an equivalent of GitHub in the realm of Software 2.0. I think Hugging Face is the equivalent of GitHub in Software 2.0. There's also Model Atlas, and you can visualize all the code written there.

In case you're curious, by the way, the giant circle, the point in the middle, these are the parameters of Flux, the image generator. So anytime someone tunes a LoRA on top of a Flux model, you create a git commit in this space, and you create a different kind of image generator.

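So what we have is: Software 1.0 is the computer code that programs a computer, and Software 2.0 is the weights that program a neural network. Here's an example: the AlexNet image-recognizer neural network.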

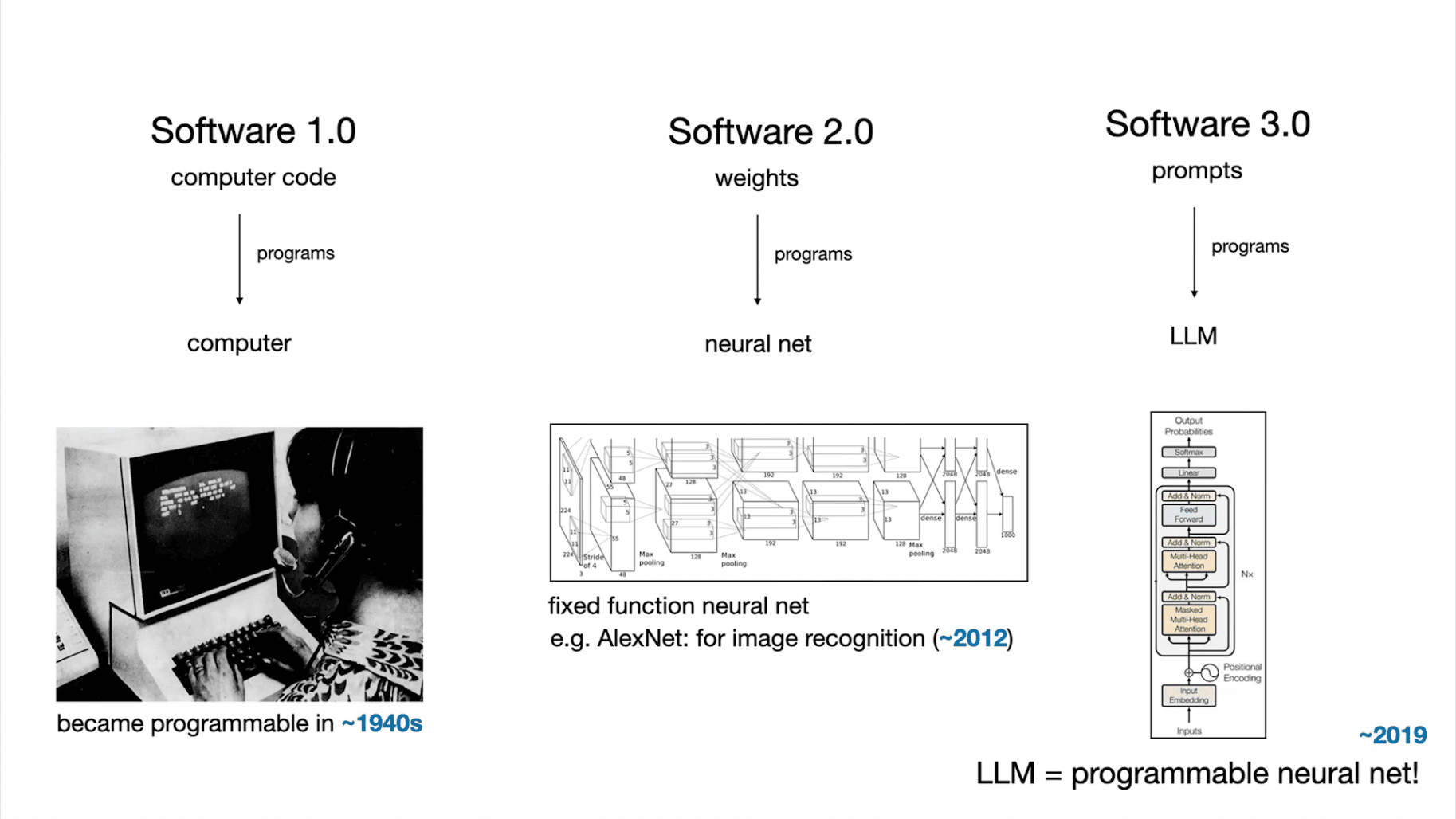

So far, all of the neural networks that we've been familiar with until recently were fixed-function computers — image to categories or something like that. I think what's changed, and is a quite fundamental change, is that neural networks became programmable with large language models.

I see this as quite new, unique. It's a new kind of computer, so in my mind, it's worth giving it a new designation of Software 3.0. Your prompts are now programs that program the LLM. Remarkably, these prompts are written in English. So it's a very interesting programming language.

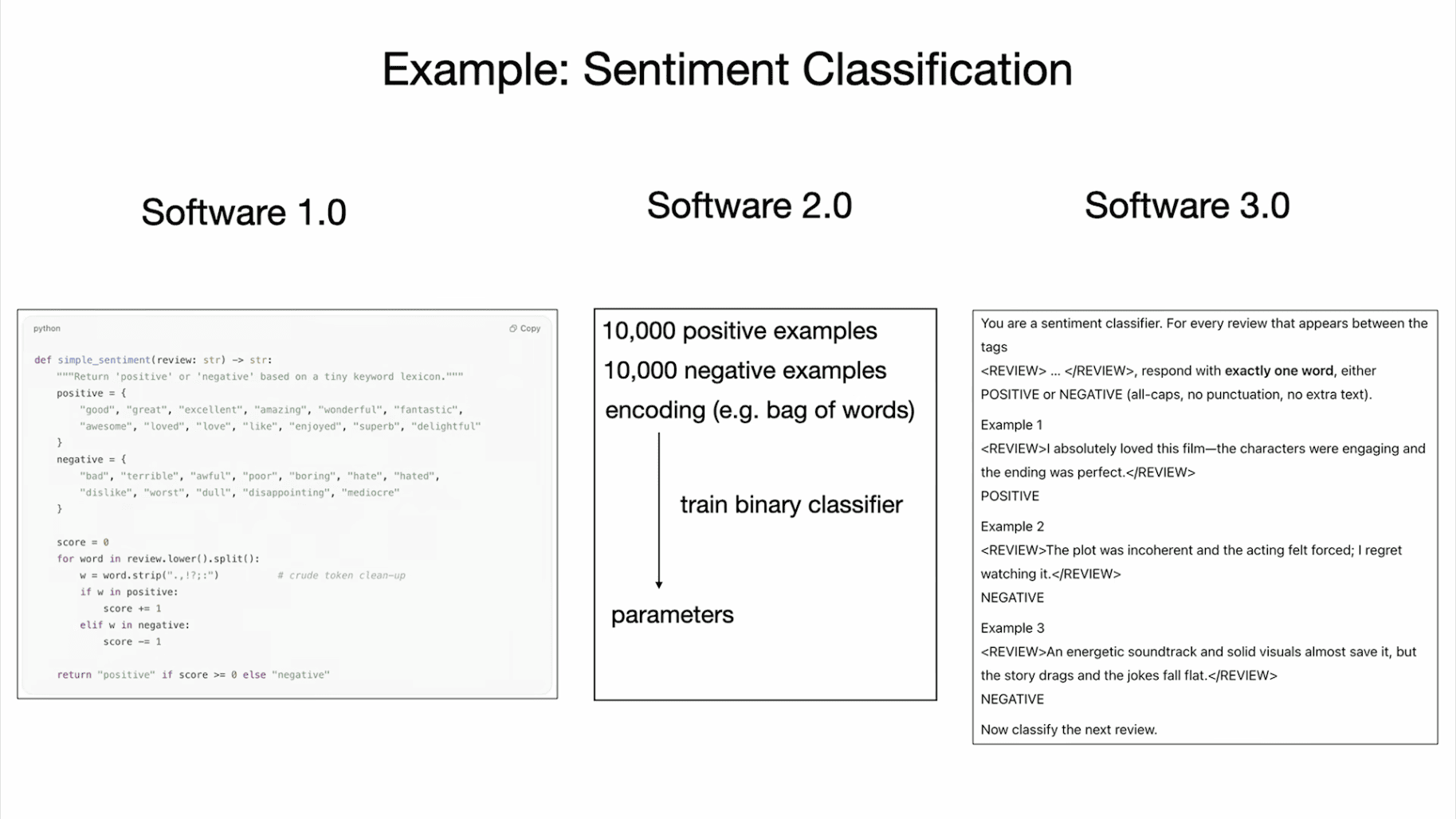

To summarize the difference: if you're doing sentiment classification, for example, you can imagine writing some amount of Python to do sentiment classification, or you can train a neural net, or you can prompt a large language model. Here this is a few-shot prompt, and you can imagine changing it and programming the computer in a slightly different way.
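To make the contrast concrete, here is a minimal sketch of the Software 1.0 and Software 3.0 versions of sentiment classification. The word lists, function names, and example reviews are made up for illustration, and the few-shot prompt is only built as a string, so no particular LLM API is assumed. The Software 2.0 version is left out because it would need a labeled dataset and a training loop.

```python
# Software 1.0: explicit rules written by hand (word lists are illustrative only).
POSITIVE = {"good", "great", "love", "amazing"}
NEGATIVE = {"bad", "terrible", "hate", "awful"}


def classify_1_0(text: str) -> str:
    """Count positive and negative words and compare the totals."""
    words = [w.strip(".,!?") for w in text.lower().split()]
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


# Software 3.0: the "program" is an English few-shot prompt.
FEW_SHOT_EXAMPLES = [
    ("I love this phone, the camera is amazing.", "positive"),
    ("Terrible battery life, I want a refund.", "negative"),
]


def build_few_shot_prompt(text: str) -> str:
    """Assemble labeled examples plus the new review into one prompt string."""
    lines = ["Classify the sentiment of each review as positive or negative.", ""]
    for review, label in FEW_SHOT_EXAMPLES:
        lines += [f"Review: {review}", f"Sentiment: {label}", ""]
    lines += [f"Review: {text}", "Sentiment:"]
    return "\n".join(lines)
```

Changing the behavior in the 1.0 version means editing code, while in the 3.0 version it means editing the English instructions or the examples.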

So we have Software 1.0, Software 2.0, and I think we're seeing, and maybe you've seen, that a lot of GitHub code is not just code anymore. There's a bunch of English interspersed with code, so I think there's a growing category of a new kind of code.

Not only is it a new programming paradigm, it's also remarkable to me that it's in our native language of English. When this blew my mind a few years ago now, I tweeted this, and I think it captured the attention of a lot of people, and this is my currently pinned tweet: remarkably we're now programming computers in English.

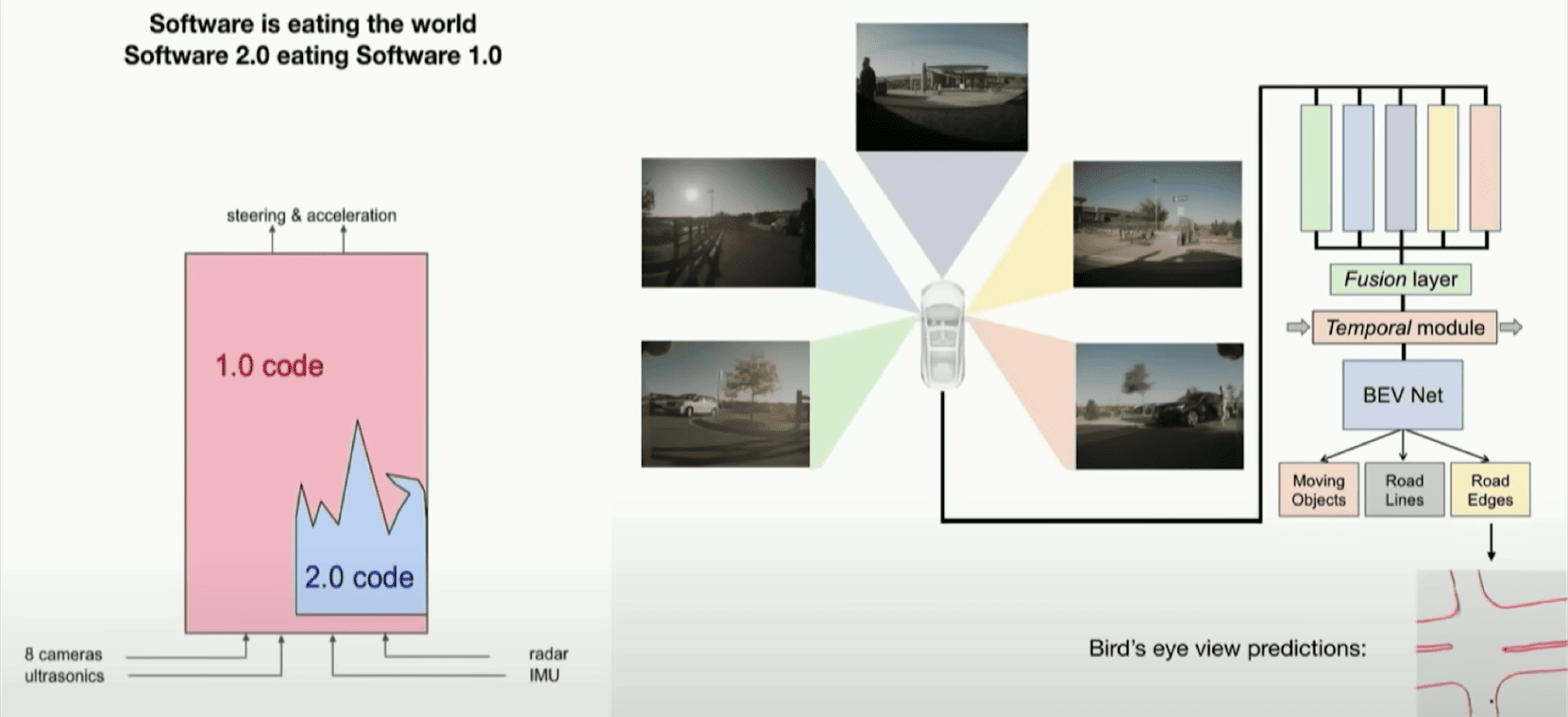

When I was at Tesla, we were working on the autopilot, and we were trying to get the car to drive, and I showed this slide at the time where you can imagine that the inputs to the car are on the bottom, and they're going through a software stack to produce the steering and acceleration.

I made the observation at the time that there was a ton of C++ code around in the autopilot, which was the Software 1.0 code, and then there were some neural nets in there doing image recognition. I observed that over time, as we made the autopilot better, the neural network grew in capability and size, and in addition to that, all the C++ code was being deleted, and a lot of the capabilities and functionality that were originally written in 1.0 were migrated to 2.0.

As an example, a lot of the stitching up of information across images from the different cameras and across time was done by a neural network, and we were able to delete a lot of code. So the Software 2.0 stack quite literally ate through the software stack of the autopilot.

I thought this was really remarkable at the time, and I think we're seeing the same thing again where we have a new kind of software, and it's eating through the stack. We have three completely different programming paradigms, and I think if you're entering the industry, it's a very good idea to be fluent in all of them because they all have slight pros and cons, and you may want to program some functionality in 1.0 or 2.0 or 3.0. Are you going to train a neural net? Are you going to just prompt an LLM? Should this be a piece of code that's explicit, etc.? So we all have to make these decisions and actually potentially fluidly transition between these paradigms.

What I wanted to get into now is, first, I want to talk about LLMs and how to think of this new paradigm and the ecosystem and what that looks like. What is this new computer? What does it look like, and what does the ecosystem look like?

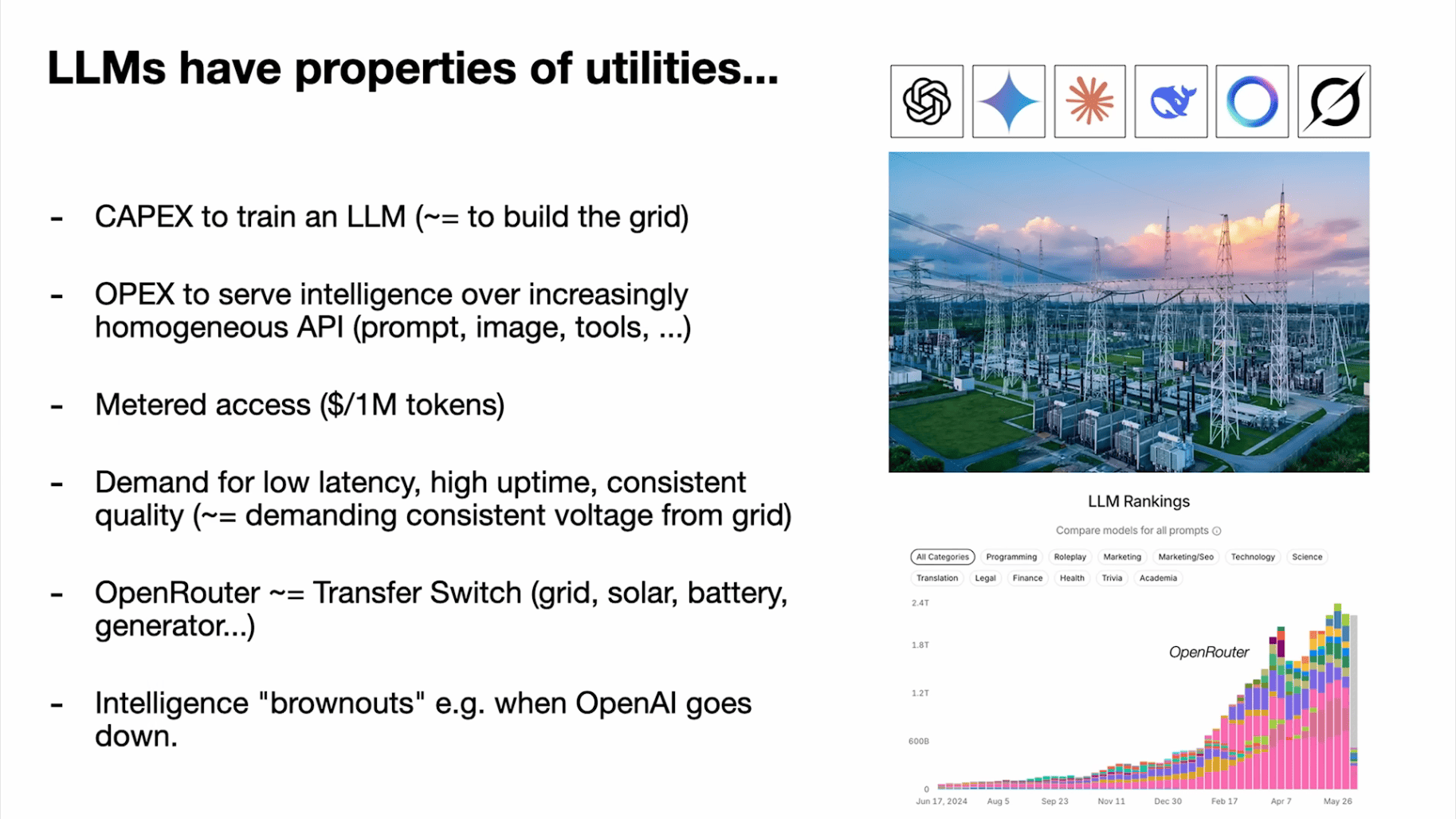

I was struck by this quote from Andrew Ng actually, many years ago now, and I think Andrew is going to be speaking right after me. He said at the time, "AI is the new electricity," and I do think that it captures something very interesting in that LLMs certainly feel like they have properties of utilities right now.

AI is the new electricity

- Andrew Ng

So LLM labs like OpenAI, Gemini, Anthropic, etc., they spend CAPEX to train the LLMs, and this is equivalent to building out a grid, and then there's OPEX to serve that intelligence over APIs to all of us, and this is done through metered access where we pay per million tokens or something like that.

We have a lot of demands that are very utility-like demands out of this API: we demand low latency, high uptime, consistent quality, etc. In electricity, you would have a transfer switch, so you can transfer your electricity source between grid, solar, battery, or generator. With LLMs, we have maybe OpenRouter, and we can easily switch between the different types of LLMs that exist.
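The transfer-switch idea can be sketched as a small fallback wrapper. This is only an illustration under stated assumptions: the providers are plain Python callables standing in for real API clients, the names `complete_with_fallback`, `primary`, and `backup` are hypothetical, and nothing here reflects how OpenRouter or any specific service is implemented.

```python
from typing import Callable, Sequence

# A provider is any callable that takes a prompt and returns text.
Provider = Callable[[str], str]


def complete_with_fallback(
    prompt: str, providers: Sequence[tuple[str, Provider]]
) -> tuple[str, str]:
    """Try each provider in order and return (provider_name, completion)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # an outage, a timeout, a rate limit, ...
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stand-ins for real API clients (hypothetical; no real SDK is called here).
def primary(prompt: str) -> str:
    raise ConnectionError("service unavailable")


def backup(prompt: str) -> str:
    return f"echo: {prompt}"
```

Calling `complete_with_fallback("hi", [("primary", primary), ("backup", backup)])` skips the failing provider and returns the backup's answer, which is the software version of flipping from grid to generator.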

Because the LLMs are software, they don't compete for physical space. So it's okay to have six electricity providers, and you can switch between them, right? Because they don't compete in such a direct way.

What's also fascinating, and we saw this in the last few days actually, is that a lot of the LLMs went down, and people were stuck and unable to work. I think it's fascinating to me that when the state-of-the-art LLMs go down, it's actually like an intelligence brownout in the world. It's like when the voltage is unreliable in the grid, and the planet just gets dumber the more reliance we have on these models, which already is really dramatic and I think will continue to grow.

But LLMs don't only have properties of utilities. I think it's also fair to say that they have some properties of fabs. The reason for this is that the CAPEX required for building LLMs is actually quite large. It's not just building some power station or something like that, right? You're investing a huge amount of money, and I think the tech tree for the technology is growing quite rapidly.

So we're in a world where we have deep tech trees, research and development secrets that are centralizing inside the LLM labs. But I think the analogy muddies a little bit also because, as I mentioned, this is software, and software is a bit less defensible because it is so malleable. I think it's just an interesting thing to think about potentially.

There are many analogies you can make: like a 4-nanometer process node maybe is something like a cluster with certain max FLOPS. You can think about when you're using Nvidia GPUs and you're only doing the software and you're not doing the hardware, that's like the fabless model. But if you're actually also building your own hardware and you're training on TPUs, if you're Google, that's like the Intel model where you own your fab.

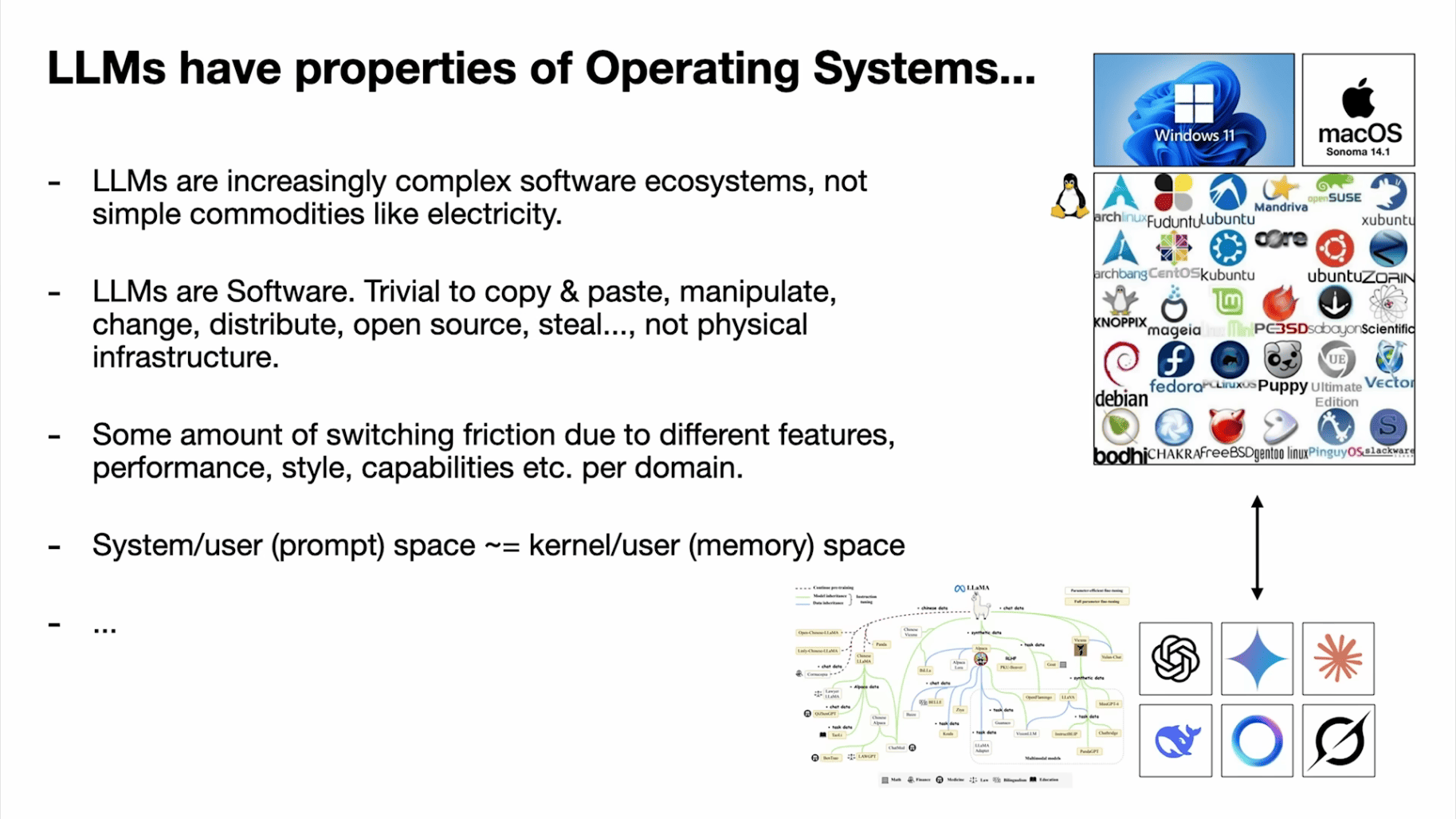

So I think there are some analogies here that make sense. But actually, I think the analogy that makes the most sense perhaps is that in my mind, LLMs have very strong analogies to operating systems, in that this is not just electricity or water. It's not something that comes out of the tap as a commodity. These are now increasingly complex software ecosystems, right? So they're not just simple commodities like electricity.

It's interesting to me that the ecosystem is shaping in a very similar way where you have a few closed-source providers like Windows or macOS, and then you have an open-source alternative like Linux. And I think for LLMs as well, we have a few competing closed-source providers, and then maybe the Llama ecosystem is currently a close approximation to something that may grow into something like Linux.

Again, I think it's still very early because these are just simple LLMs, but we're starting to see that these are going to get a lot more complicated. It's not just about the LLM itself. It's about all the tool use and the multimodalities and how all of that works.

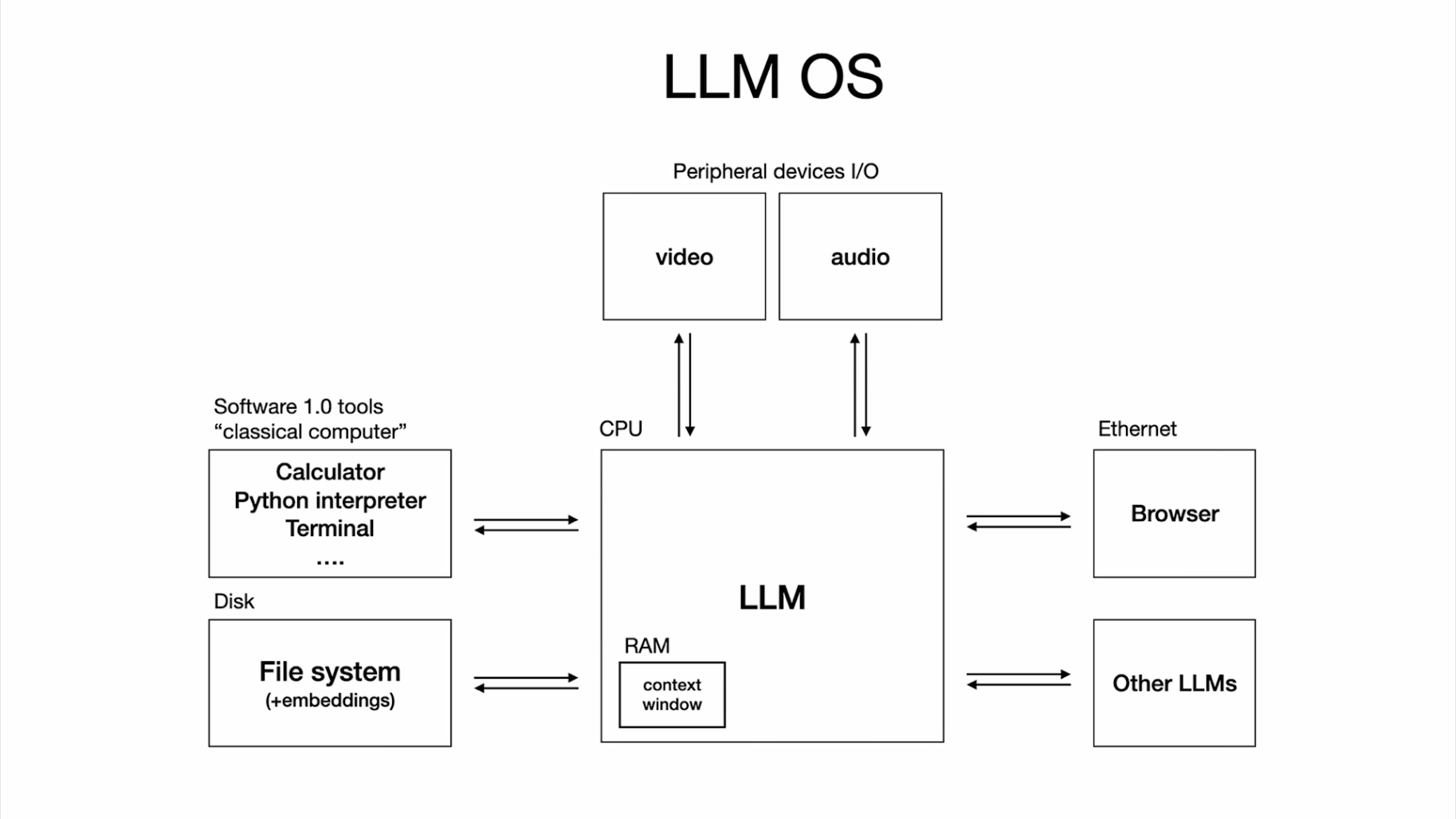

When I had this realization a while back, I tried to sketch it out, and it seemed to me like LLMs are like a new operating system, right? So the LLM is a new kind of computer. It's like the CPU equivalent. The context windows are like the memory, and then the LLM is orchestrating memory and compute for problem-solving using all of these capabilities here. Definitely if you look at it, it looks very much like an operating system from that perspective.

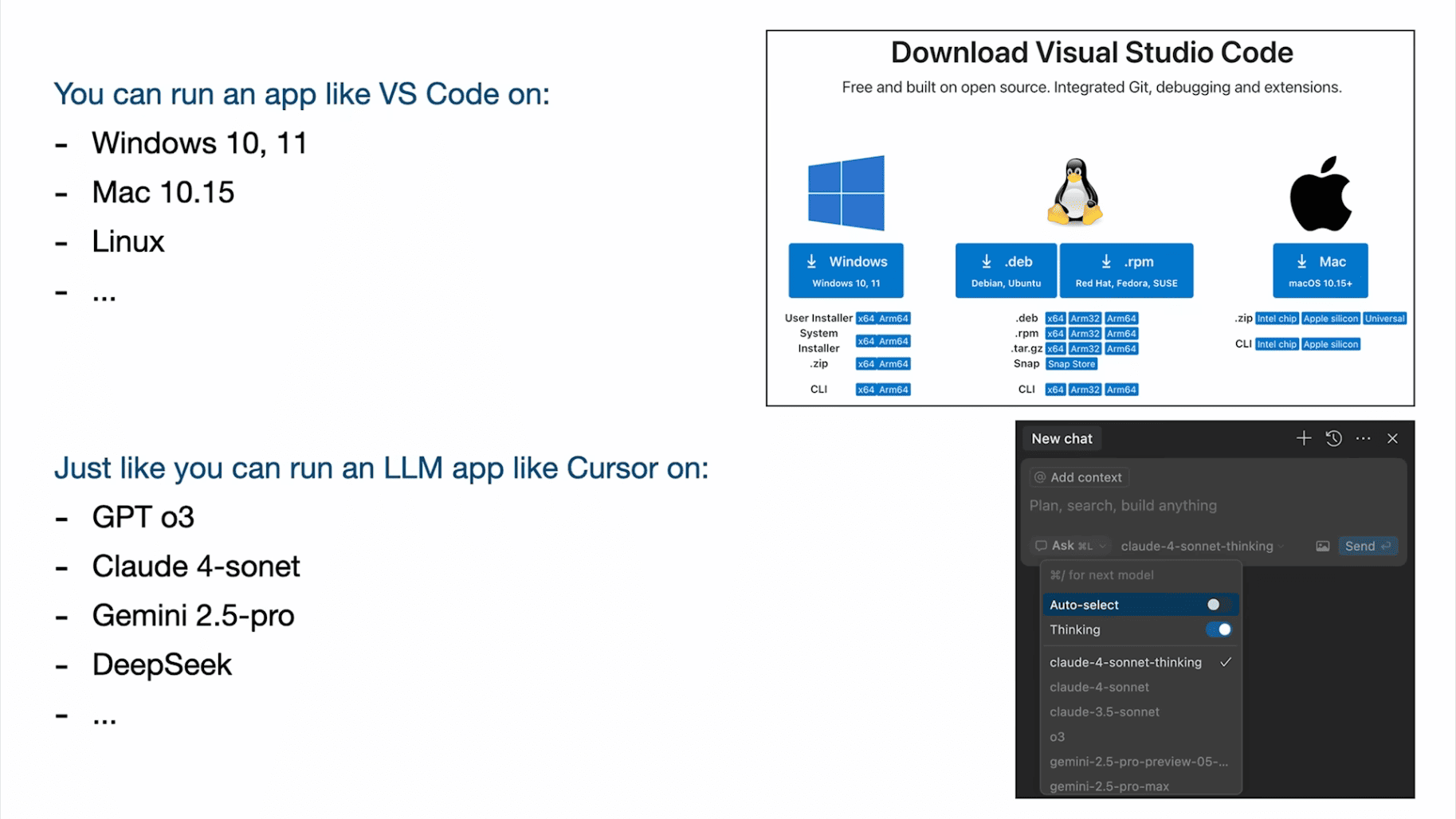

A few more analogies. For example, if you want to download an app, say I go to VS Code and I go to download, you can download VS Code and you can run it on Windows, Linux, or Mac. In the same way, you can take an LLM app like Cursor and you can run it on GPT or Claude or Gemini series, right? It's just a dropdown. So it's similar in that way as well.

Another analogy that strikes me is that we're in this 1960s-ish era where LLM compute is still very expensive for this new kind of computer, and that forces the LLMs to be centralized in the cloud, and we're all thin clients that interact with it over the network. None of us have full utilization of these computers, and therefore it makes sense to use time-sharing where we're all a dimension of the batch when they're running the computer in the cloud.

This is very much what computers used to look like during this time. The operating systems were in the cloud. Everything was streamed around, and there was batching. So the personal computing revolution hasn't happened yet because it's just not economical. It doesn't make sense. But I think some people are trying.

It turns out that Mac Minis, for example, are a very good fit for some of the LLMs because if you're doing batch one inference, this is all super memory-bound. So this actually works. I think these are some early indications maybe of personal computing. But this hasn't really happened yet. It's not clear what this looks like. Maybe some of you get to invent what this is or how it works or what this should be.

Maybe one more analogy that I'll mention is whenever I talk to ChatGPT or some LLM directly in text, I feel like I'm talking to an operating system through the terminal. It's just text. It's direct access to the operating system. And I think a GUI hasn't yet really been invented in a general way. Should ChatGPT have a GUI different than just text bubbles? Certainly some of the apps that we're going to go into in a bit have GUIs, but there's no GUI across all the tasks if that makes sense.

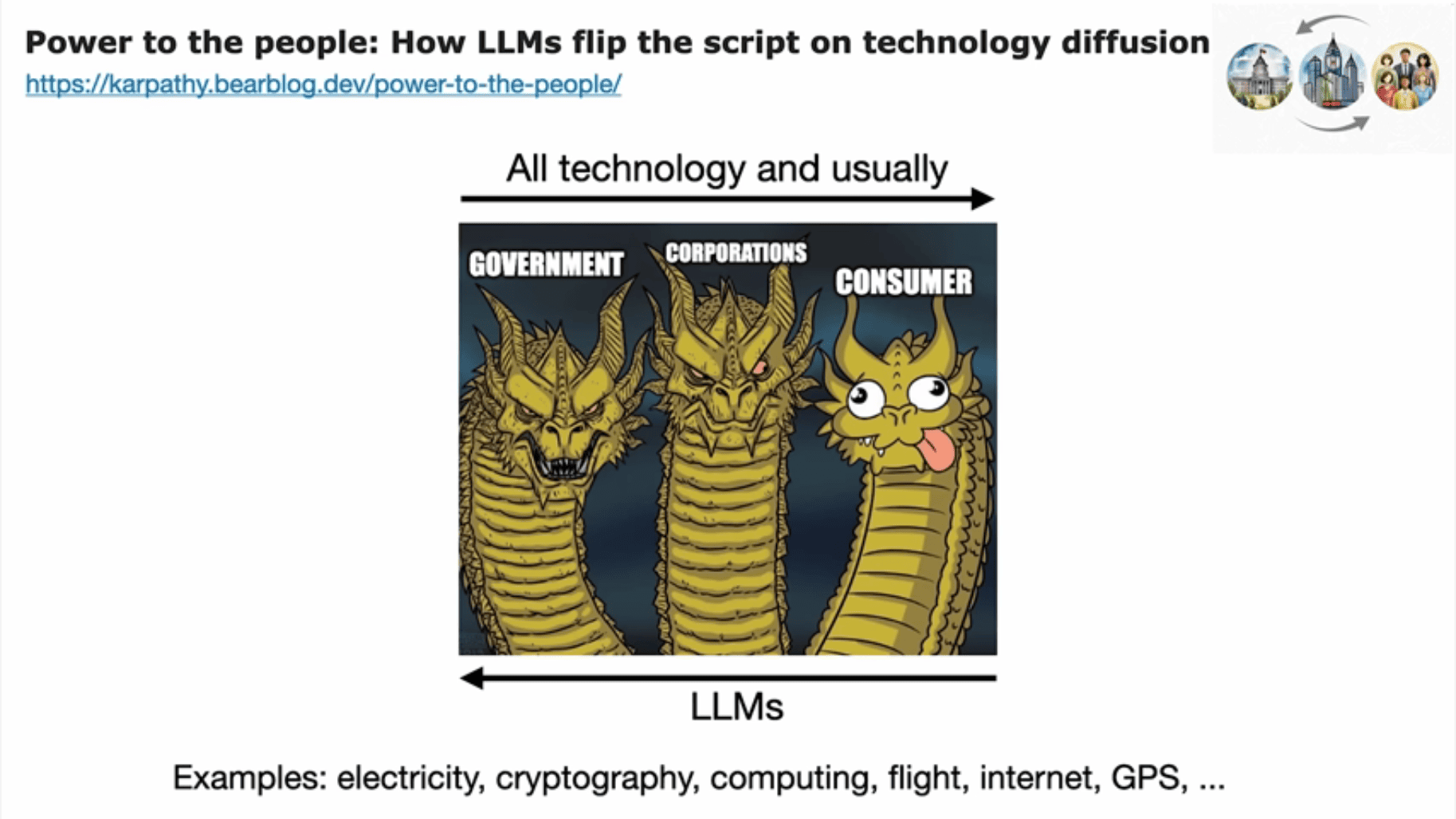

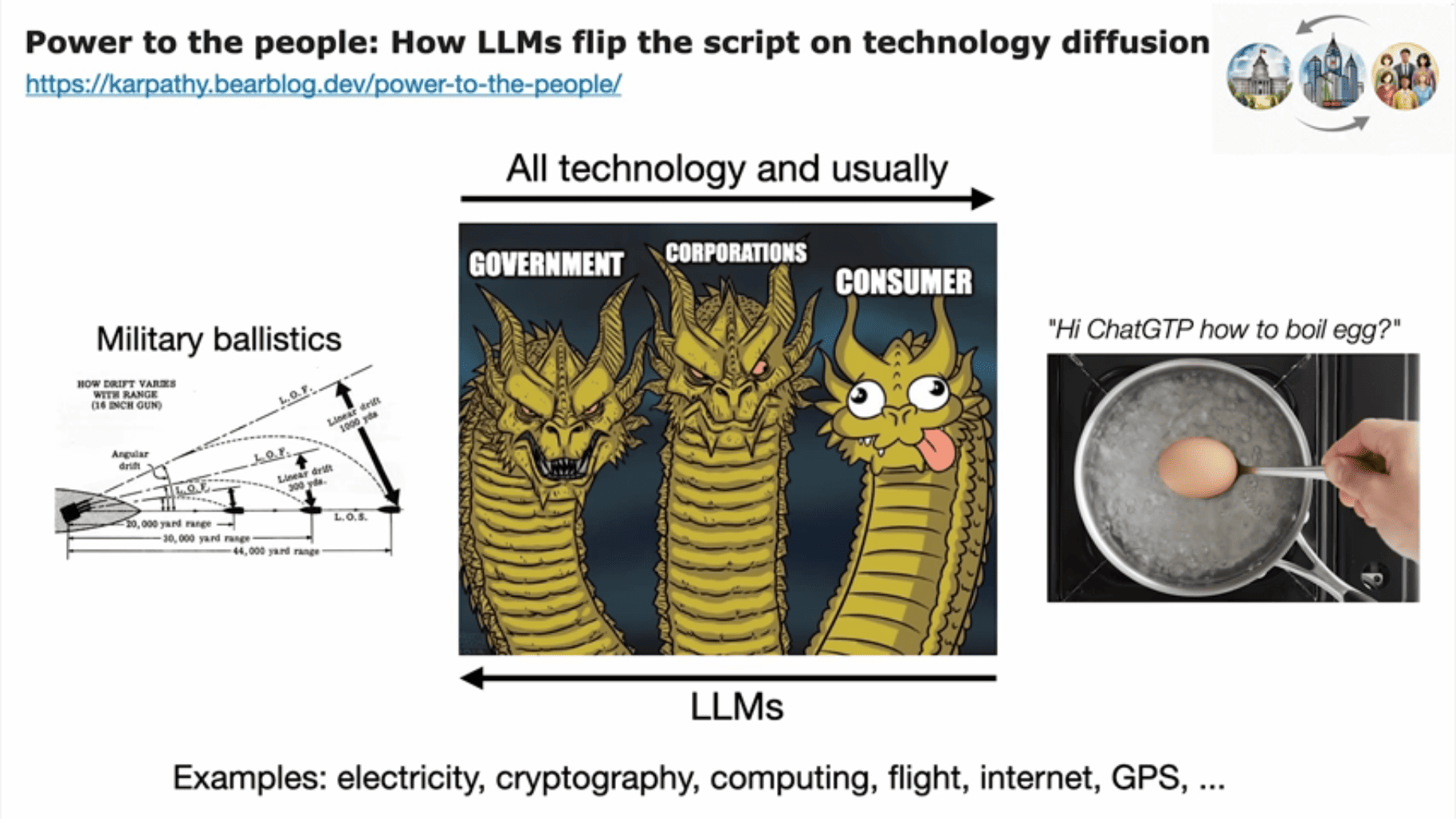

There are some ways in which LLMs are different from operating systems and from early computing in fairly unique ways. I wrote about this one particular property that strikes me as very different this time around. It's that LLMs flip the direction of technology diffusion that is usually present in technology.

For example, take electricity, cryptography, computing, flight, internet, GPS: lots of new transformative technologies that have been around. Typically, it is the government and corporations that are the first users because it's new and expensive, etc., and it only later diffuses to consumers. But I feel like LLMs are flipped around.

So maybe with early computers, it was all about ballistics and military use, but with LLMs, it's all about how do you boil an egg or something like that. This is certainly a lot of my use. So it's really fascinating to me that we have a new magical computer, and it's helping me boil an egg. It's not helping the government do something really crazy like some military ballistics or some special technology.

Indeed, corporations and governments are lagging behind the adoption of all of us, of all of these technologies. So it's just backwards, and I think it informs maybe some of the uses of how we want to use this technology or where are some of the first apps and so on.

So, in summary so far:

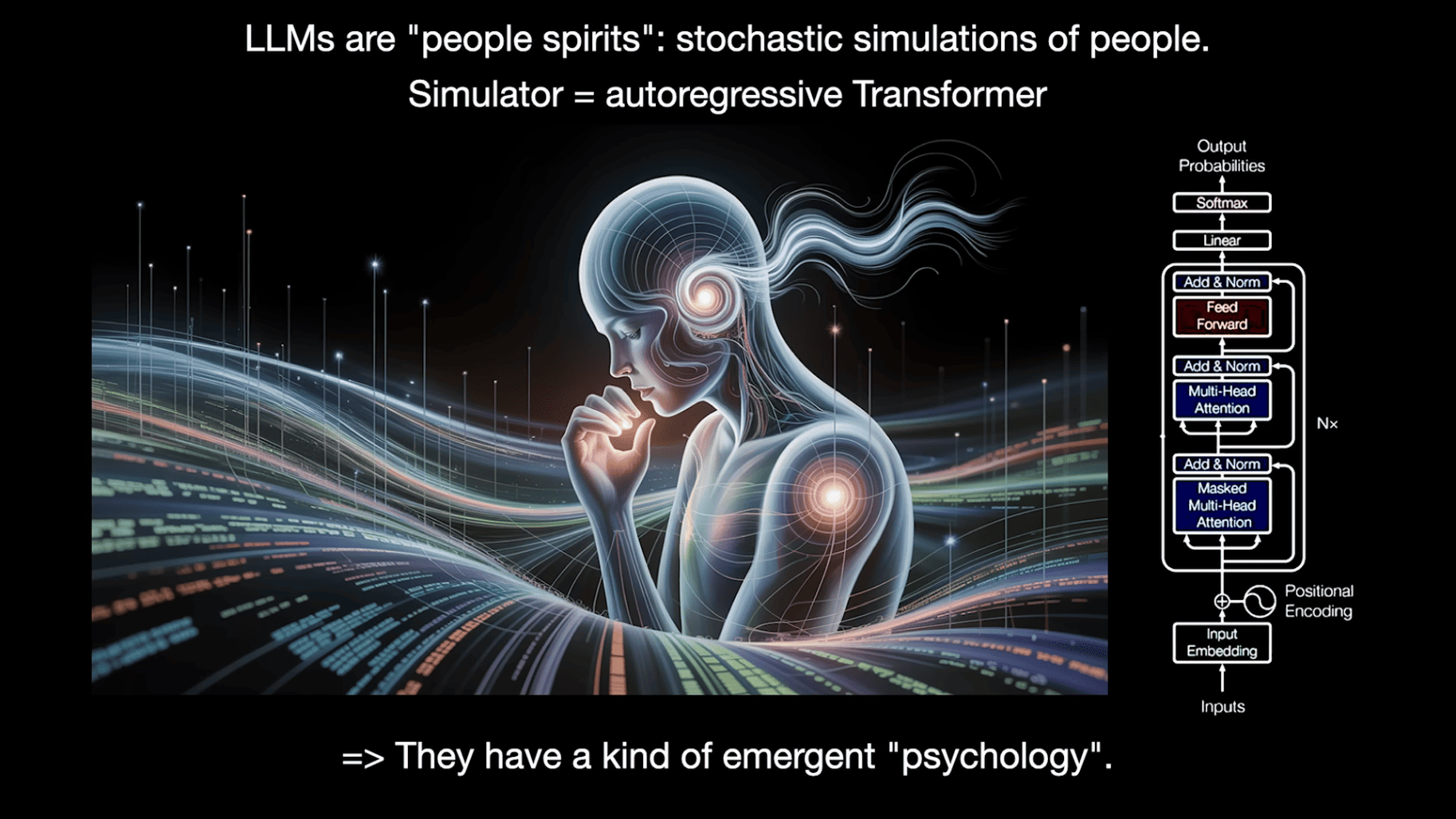

Before we program LLMs, we have to spend some time to think about what these things are. I especially like to talk about their psychology. The way I like to think about LLMs is that they're like people spirits. They are stochastic simulations of people. The simulator in this case happens to be an autoregressive transformer.

So a transformer is a neural net, and it just goes on the level of tokens. It goes chunk, chunk, chunk, chunk, chunk. And there's an almost equal amount of compute for every single chunk. This simulator basically has some weights involved, and we fit it to all of the text that we have on the internet and so on. And you end up with this kind of simulator. And because it is trained on humans, it's got this emergent psychology that is human-like.
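The "chunk, chunk, chunk" loop can be illustrated with a toy stand-in. The sketch below is not a transformer: it assumes a simple bigram count table as the "weights", fitted to a tiny made-up corpus, and it only looks at the previous token, whereas a real transformer conditions on the whole context window. What it does share is the autoregressive shape: fit to text, then sample one token at a time and feed it back in.

```python
import random
from collections import defaultdict


def fit_bigram(tokens):
    """'Training': count which token follows which in the corpus."""
    counts = defaultdict(lambda: defaultdict(int))
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def generate(counts, start, n_tokens, seed=0):
    """Autoregressive loop: sample one token, append it, repeat."""
    rng = random.Random(seed)  # seeded so a run can be reproduced
    out = [start]
    for _ in range(n_tokens):
        followers = counts.get(out[-1])
        if not followers:  # no known continuation for this token
            break
        choices, weights = zip(*followers.items())
        out.append(rng.choices(choices, weights=weights)[0])
    return out


corpus = "the cat sat on the mat and the cat ran".split()
model = fit_bigram(corpus)
sample = generate(model, "the", 5)
```

Each step does the same amount of work regardless of which token comes out, and the output is stochastic: a different seed can give a different continuation from the same "weights".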

So the first thing you'll notice is, of course, LLMs have encyclopedic knowledge and memory. And they can remember lots of things, a lot more than any single individual human can because they read so many things.

It actually reminds me of this movie Rain Man, which I actually really recommend people watch. It's an amazing movie. I love this movie. And Dustin Hoffman here is an autistic savant who has almost perfect memory. So he can read a phone book and remember all of the names and phone numbers. I feel like LLMs are very similar. They can remember SHA hashes and lots of different kinds of things very, very easily.

So they certainly have superpowers in some respects. But they also have a bunch of cognitive deficits. So they hallucinate quite a bit. And they make up stuff and don't have a very good internal model of self-knowledge, not sufficient at least. And this has gotten better but not perfect.

They display jagged intelligence. So they're going to be superhuman in some problem-solving domains, and then they're going to make mistakes that no human will make, like they will insist that 9.11 is greater than 9.9 or that there are two R's in "strawberry" — these are some famous examples, but there are rough edges that you can trip on. So that's also kind of unique.
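For contrast, both of these famous examples are one-liners in ordinary Software 1.0 code, which is part of why it pays to stay fluent in all three paradigms. A minimal check in Python (the variable names are just for illustration):

```python
from decimal import Decimal

# Compare as decimals so the check does not depend on float rounding.
nine_eleven_is_greater = Decimal("9.11") > Decimal("9.9")  # False

# Counting characters is trivial for explicit code.
r_count = "strawberry".count("r")  # 3
```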

They also suffer from retrograde amnesia, and I think I'm alluding to the fact that if you have a coworker who joins your organization, this coworker will over time learn your organization, and they will understand and gain a huge amount of context on the organization, and they go home and they sleep and they consolidate knowledge and they develop expertise over time.

LLMs don't natively do this, and this is not something that has really been solved in the R&D of LLMs. So context windows are really like working memory, and you have to program the working memory quite directly because they don't just get smarter by default. And I think a lot of people get tripped up by the analogies in this way.

In popular culture, I recommend people watch these two movies: Memento and 50 First Dates. In both of these movies, the protagonists, their weights are fixed, and their context windows get wiped every single morning, and it's really problematic to go to work or have relationships when this happens, and this happens all the time.

I guess one more thing I would point to is security-related limitations of the use of LLMs. So for example, LLMs are quite gullible. They are susceptible to prompt injection risks. They might leak your data, etc. And there are many other security-related considerations.

So basically long story short, you have to simultaneously think through this superhuman thing that has a bunch of cognitive deficits and issues. They are extremely useful, and so how do we program them and how do we work around their deficits and enjoy their superhuman powers?

What I want to switch to now is talk about the opportunities of how do we use these models and what are some of the biggest opportunities. This is not a comprehensive list, just some of the things that I thought were interesting for this talk.

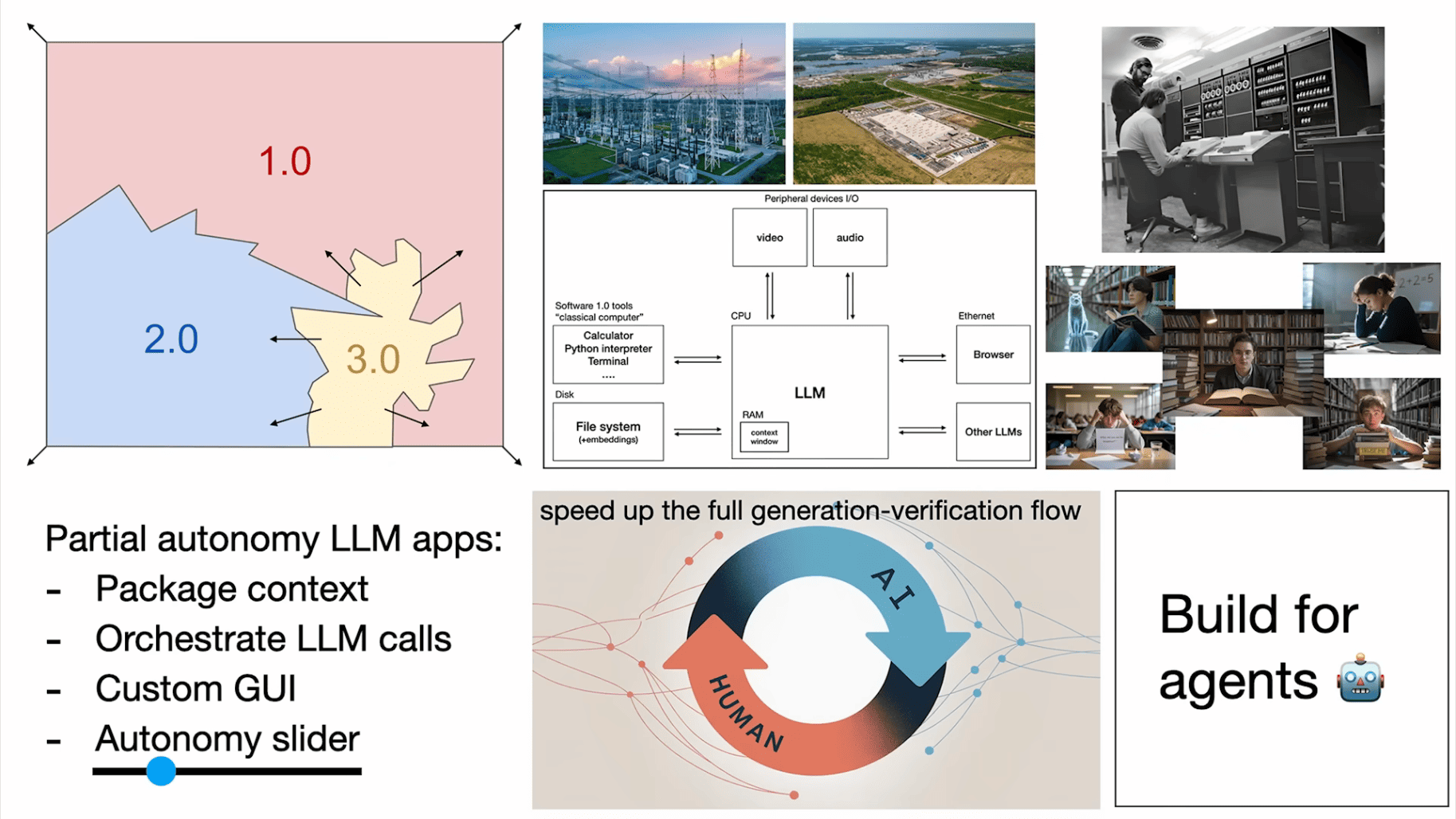

The first thing I'm excited about is what I would call partial autonomy apps. For example, let's work with the example of coding. You can certainly go to ChatGPT directly and you can start copy-pasting code around and copy-pasting bug reports and stuff around and getting code and copy-pasting everything around. Why would you do that? Why would you go directly to the operating system? It makes a lot more sense to have an app dedicated for this.

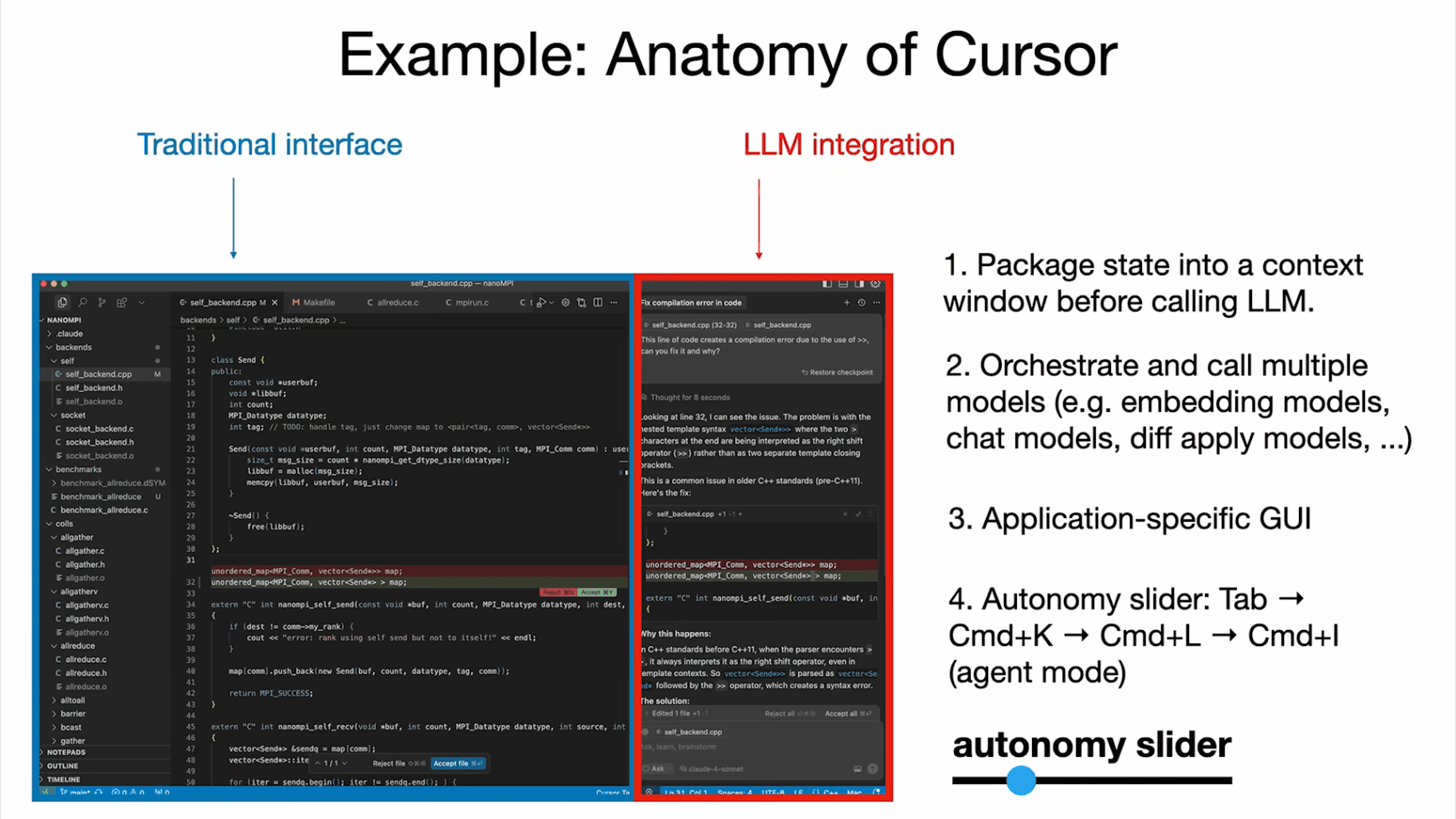

And so I think many of you use Cursor. I do as well. And Cursor is the thing you want instead. You don't want to just directly go to the ChatGPT app. And I think Cursor is a very good example of an early LLM app that has a bunch of properties that I think are useful across all the LLM apps.

So in particular, you will notice that we have a traditional interface that allows a human to go in and do all the work manually just as before. But in addition to that, we now have this LLM integration that allows us to go in bigger chunks.

And so some of the properties of LLM apps that I think are shared and useful to point out:

1The LLMs do a ton of the context management.

2They orchestrate multiple calls to LLMs, right? So in the case of Cursor, there's under the hood embedding models for all your files, the actual chat models, models that apply diffs to the code, and this is all orchestrated for you.

3A really big one that I think also maybe not fully appreciated always is application-specific GUI and the importance of it. Because you don't just want to talk to the operating system directly in text. Text is very hard to read, interpret, understand, and also you don't want to take some of these actions natively in text. So it's much better to just see a diff as red and green change and you can see what's being added or subtracted. It's much easier to just do

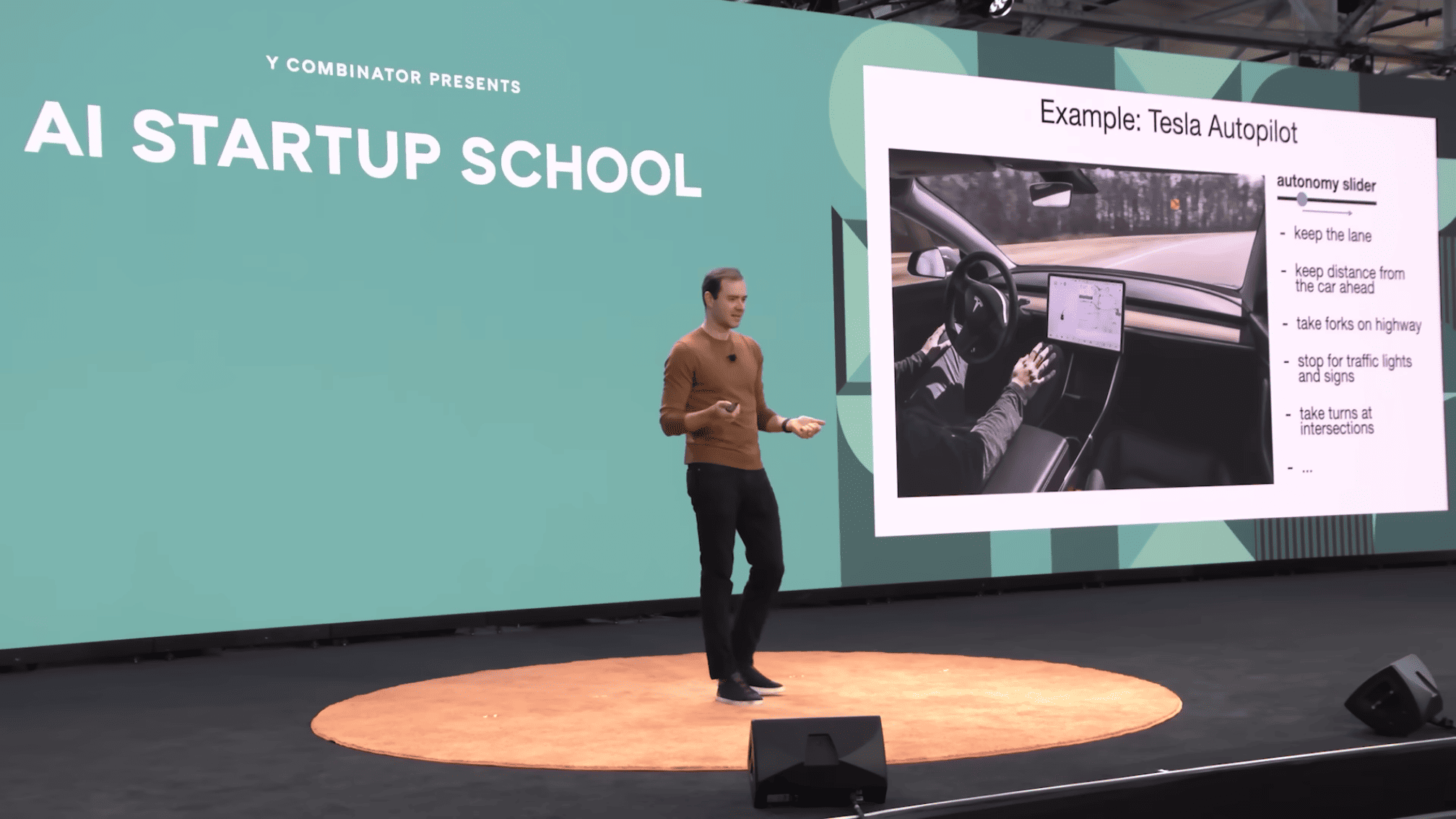

Command+Y to accept or Command+N to reject. I shouldn't have to type it in text, right? So a GUI allows a human to audit the work of these fallible systems and to go faster.4The last feature I want to point out is that there's what I call the autonomy slider. So, for example, in Cursor, you can just do tab completion. You're mostly in charge. You can select a chunk of code and

Command+K to change just that chunk of code. You can do Command+L to change the entire file. Or you can do Command+I which just let it rip, do whatever you want in the entire repo, and that's the sort of full autonomy agentic version.And so you are in charge of the autonomy slider, and depending on the complexity of the task at hand, you can tune the amount of autonomy that you're willing to give up for that task.
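The accept-or-reject flow over a diff can be sketched with Python's standard `difflib`. This is a rough illustration, not how Cursor works internally: the function names, the file name, and the two code snippets are made up, and the "GUI" is reduced to a boolean decision.

```python
import difflib


def make_diff(old: str, new: str, filename: str = "example.py") -> list[str]:
    """Return unified-diff lines between two versions of a file."""
    return list(
        difflib.unified_diff(
            old.splitlines(),
            new.splitlines(),
            fromfile=f"a/{filename}",
            tofile=f"b/{filename}",
            lineterm="",
        )
    )


def review(old: str, new: str, accept: bool) -> str:
    """The human decision: keep the proposed text or stay with the original."""
    return new if accept else old


old_code = "def area(r):\n    return 3.14 * r * r\n"
new_code = "import math\n\ndef area(r):\n    return math.pi * r * r\n"
diff_lines = make_diff(old_code, new_code)
```

A GUI would color the lines starting with `+` green and the ones starting with `-` red, so the reviewer audits a handful of changed lines instead of rereading the whole file.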

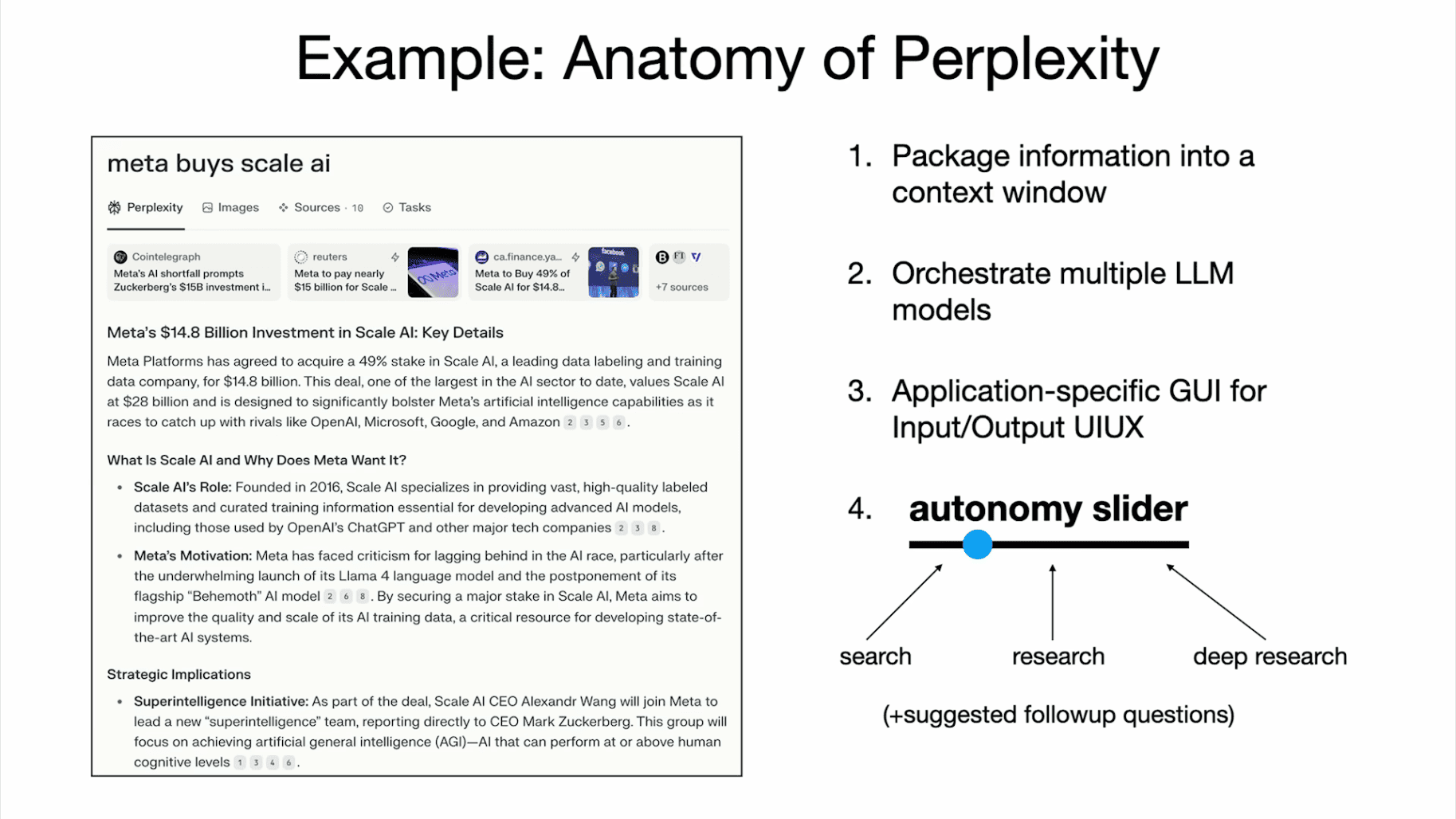

Maybe to show one more example of a fairly successful LLM app, Perplexity. It also has very similar features to what I've just pointed out in Cursor. It packages up a lot of the information. It orchestrates multiple LLMs. It's got a GUI that allows you to audit some of its work. So, for example, it will cite sources and you can imagine inspecting them. And it's got an autonomy slider. You can either just do a quick search or you can do research or you can do deep research and come back 10 minutes later. So this is all just varying levels of autonomy that you give up to the tool.

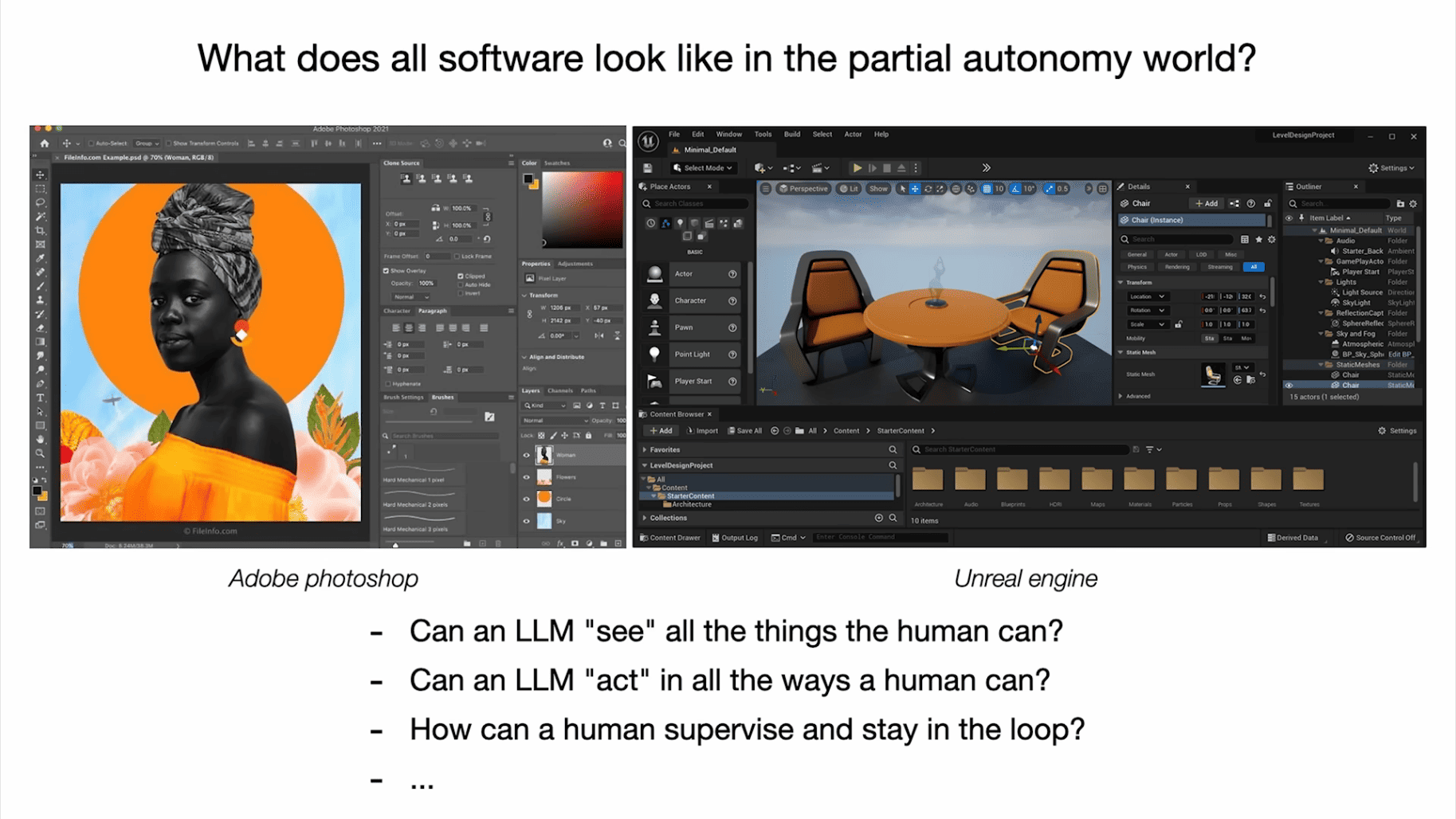

So, I guess my question is: I feel like a lot of software will become partially autonomous. I'm trying to think through what does that look like? And for many of you who maintain products and services, how are you going to make your products and services partially autonomous? Can an LLM see everything that a human can see? Can an LLM act in all the ways that a human could act? And can humans supervise and stay in the loop of this activity?

Because again, these are fallible systems that aren't yet perfect. And what does a diff look like in Photoshop or something like that? You know, and also a lot of the traditional software right now, it has all these switches and all this stuff that's all designed for humans. All of this has to change and become accessible to LLMs.

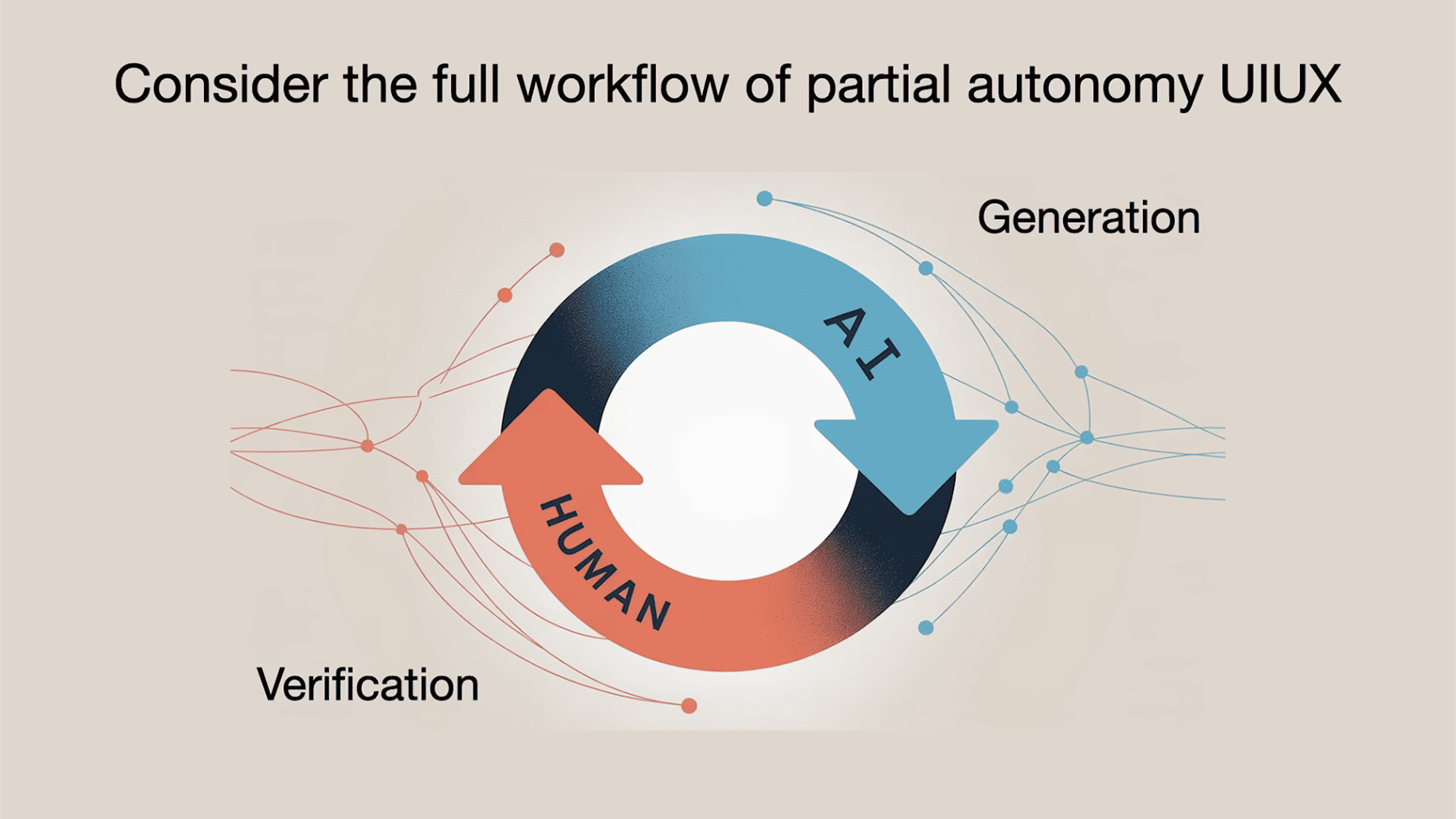

One thing I want to stress with a lot of these LLM apps that I'm not sure gets as much attention as it should is we're now cooperating with AIs, and usually they are doing the generation and we as humans are doing the verification. It is in our interest to make this loop go as fast as possible so we're getting a lot of work done.

There are two major ways that I think this can be done:

1. You can speed up verification a lot. And I think GUIs, for example, are extremely important to this because a GUI utilizes the computer vision GPU in all of our heads. Reading text is effortful and it's not fun, but looking at stuff is fun and it's just a highway to your brain. So I think GUIs are very useful for auditing systems and visual representations in general.

2. We have to keep the AI on the leash. I think a lot of people are getting way over-excited with AI agents, and it's not useful to me to get a diff of 10,000 lines of code to my repo. I'm still the bottleneck, right? Even though those 10,000 lines come out instantly, I have to make sure that this thing is not introducing bugs, that it's doing the correct thing, and that there are no security issues and so on.

We have to make the flow of these two go very, very fast, and we have to somehow keep the AI on the leash because it gets way too overreactive. This is how I feel when I do AI-assisted coding. If I'm just vibe coding, everything is nice and great, but if I'm actually trying to get work done, it's not so great to have an overreactive agent doing all this stuff.

I'm trying to develop, like many of you, some ways of utilizing these agents in my coding workflow and to do AI-assisted coding. And in my own work, I'm always scared to get way too big diffs. I always go in small incremental chunks. I want to make sure that everything is good. I want to spin this loop very, very fast, and I work on small chunks of a single concrete thing. And so I think many of you probably are developing similar ways of working with the LLMs.

"AI-assisted coding" workflows (very rapidly evolving...):

don't ask for code, ask for approaches

I also saw a number of blog posts that try to develop these best practices for working with LLMs. And here's one that I read recently and I thought was quite good. And it discussed some techniques, and some of them have to do with how you keep the AI on the leash.

And so, as an example, if your prompt is vague, then the AI might not do exactly what you wanted, and in that case, verification will fail. You're going to ask for something else. If verification fails, then you're going to start spinning. So it makes a lot more sense to spend a bit more time to be more concrete in your prompts, which increases the probability of successful verification, and you can move forward. And so I think a lot of us are going to end up finding techniques like this.
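The spinning described here can be sketched as a bounded generate-and-verify loop. Everything in this block is a stand-in chosen for illustration: `fake_model` is a hard-coded function rather than an LLM, and `looks_right` is a string check standing in for a human review or a test suite.

```python
from typing import Callable, Optional


def generate_and_verify(
    generate: Callable[[str], str],
    verify: Callable[[str], bool],
    prompt: str,
    max_attempts: int = 3,
) -> tuple[Optional[str], int]:
    """Return (accepted_output_or_None, attempts_used)."""
    for attempt in range(1, max_attempts + 1):
        candidate = generate(prompt)
        if verify(candidate):
            return candidate, attempt
    return None, max_attempts


def fake_model(prompt: str) -> str:
    # Stand-in for an LLM: only a concrete prompt yields the wanted code.
    if "two integers" in prompt:
        return "def add(a, b):\n    return a + b"
    return "def f(*args):\n    pass"


def looks_right(code: str) -> bool:
    # Stand-in for verification (a real one would be a review or tests).
    return "return a + b" in code


vague = generate_and_verify(fake_model, looks_right, "write a function")
concrete = generate_and_verify(
    fake_model, looks_right, "write add(a, b) that returns the sum of two integers"
)
```

With the vague prompt, every attempt is spent and nothing is accepted; with the concrete prompt, the first attempt passes. The extra time spent on the prompt is paid back in fewer trips around the loop.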

Here's an example. This prompt is not unreasonable but not particularly thoughtful:

Write a Python rate limiter that limits users to 10 requests per minute.

I would expect this prompt to give okay results, but also miss some edge cases, good practices and quality standards. This is how you might see someone at nilenso prompt an AI for the same task:

Implement a token bucket rate limiter in Python with the following requirements:

- 10 requests per minute per user (identified by `user_id` string)
- Thread-safe for concurrent access
- Automatic cleanup of expired entries
- Return tuple of (allowed: bool, retry_after_seconds: int)

Consider:

- Should tokens refill gradually or all at once?
- What happens when the system clock changes?
- How to prevent memory leaks from inactive users?

Prefer simple, readable implementation over premature optimization. Use stdlib only (no Redis/external deps).
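The more detailed prompt above pins down enough that one plausible answer can be sketched directly. The following is one possible reading of those requirements, not a reference implementation: the class and method names are invented here, tokens refill gradually, a monotonic clock is assumed as the answer to the system-clock question, and idle users are evicted with a simple scan on every call, which favors readability over speed.

```python
import math
import threading
import time


class TokenBucketRateLimiter:
    """Per-user token bucket: `capacity` requests per `period_seconds`."""

    def __init__(self, capacity=10, period_seconds=60.0, clock=time.monotonic):
        self.capacity = capacity
        self.seconds_per_token = period_seconds / capacity
        self.idle_ttl = period_seconds  # a bucket idle this long is full again
        self._clock = clock  # monotonic, so wall-clock changes do not matter
        self._buckets = {}  # user_id -> (tokens, last_seen)
        self._lock = threading.Lock()

    def allow(self, user_id: str) -> tuple[bool, int]:
        """Return (allowed, retry_after_seconds) for one request."""
        with self._lock:  # one lock keeps every update atomic
            now = self._clock()
            self._evict_idle(now)
            tokens, last = self._buckets.get(user_id, (float(self.capacity), now))
            # Gradual refill, capped at the bucket size.
            tokens = min(self.capacity, tokens + (now - last) / self.seconds_per_token)
            if tokens >= 1.0:
                self._buckets[user_id] = (tokens - 1.0, now)
                return True, 0
            self._buckets[user_id] = (tokens, now)
            return False, math.ceil((1.0 - tokens) * self.seconds_per_token)

    def _evict_idle(self, now):
        # Dropping a fully refilled bucket loses nothing: a new one starts full.
        stale = [u for u, (_, last) in self._buckets.items() if now - last >= self.idle_ttl]
        for u in stale:
            del self._buckets[u]
```

Passing the clock in as a parameter is a design choice made for this sketch: it lets a test drive time by hand instead of sleeping for a minute.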

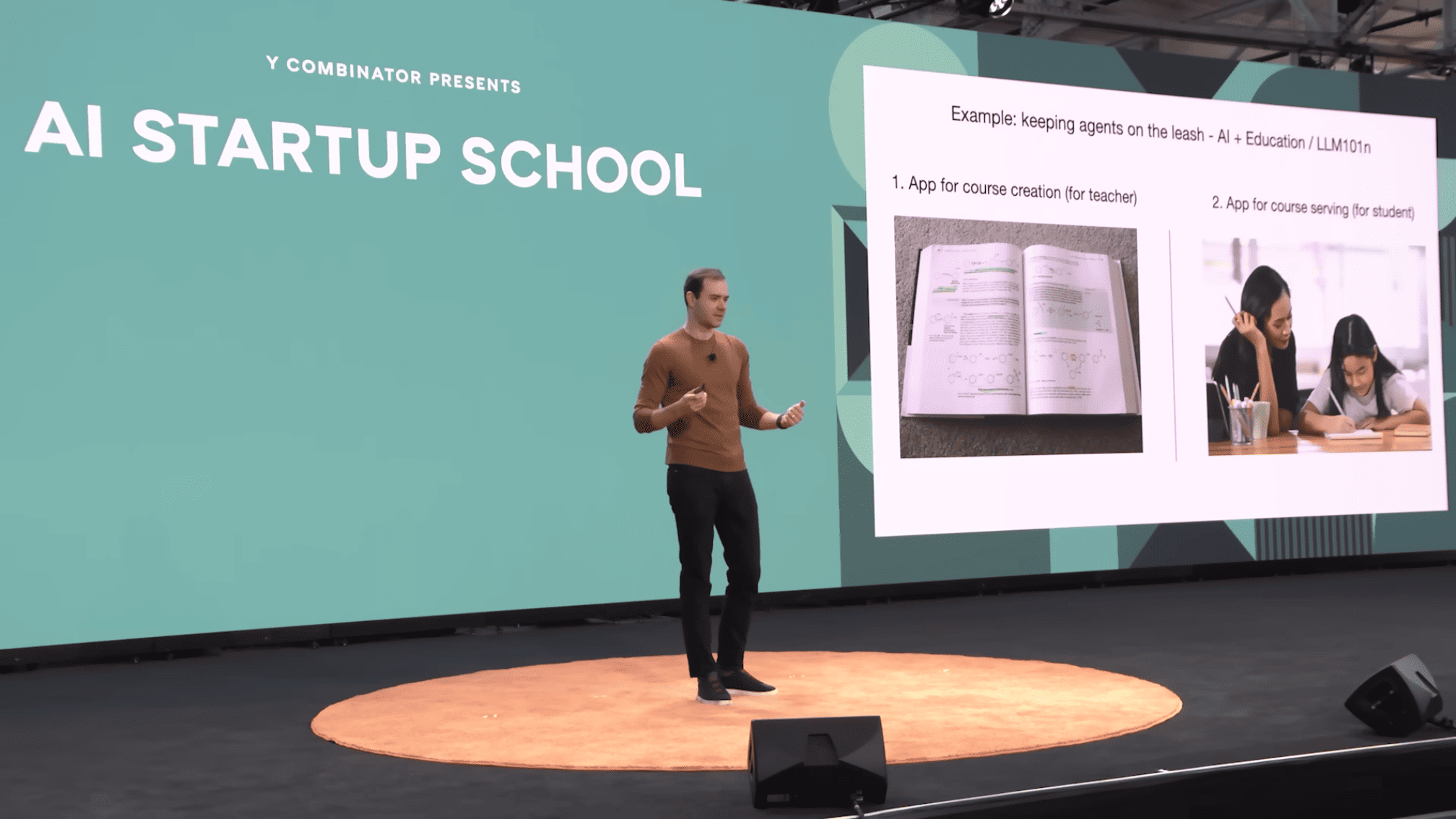

I think in my own work as well, I'm currently interested in what education looks like now that we have AI and LLMs. And I think a large amount of thought for me goes into how we keep AI on the leash.

I don't think it just works to go to chat and be like, "Hey, teach me physics." I don't think this works because the AI gets lost in the woods. And so for me, this is actually two separate apps. For example, there's an app for a teacher that creates courses, and then there's an app that takes courses and serves them to students. And in both cases, we now have this intermediate artifact of a course that is auditable, and we can make sure it's good. We can make sure it's consistent, and the AI is kept on the leash with respect to a certain syllabus, a certain progression of projects and so on.

And so this is one way of keeping the AI on the leash, and I think it has a much higher likelihood of working, and the AI is not getting lost in the woods.

One more analogy I wanted to allude to is I'm no stranger to partial autonomy, and I worked on this I think for five years at Tesla, and this is also a partial autonomy product and shares a lot of the features. Like for example, right there in the instrument panel is the GUI of the autopilot, so it's showing me what the neural network sees and so on, and we have the autonomy slider where over the course of my tenure there, we did more and more autonomous tasks for the user.

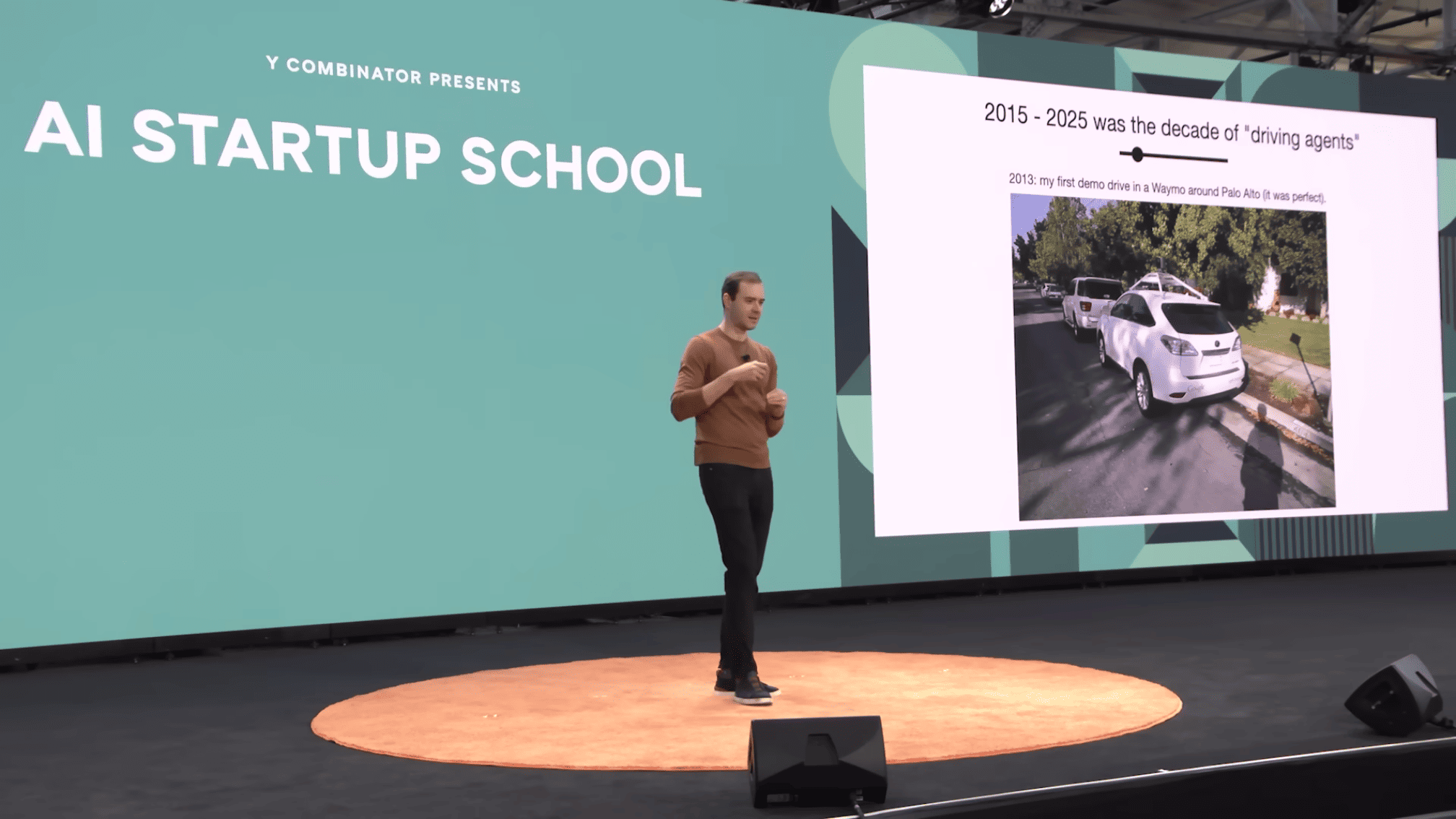

And maybe the story that I wanted to tell very briefly is actually the first time I drove a self-driving vehicle was in 2013, and I had a friend who worked at Waymo, and he offered to give me a drive around Palo Alto. I took this picture using Google Glass at the time, and many of you are so young that you might not even know what that is. But yeah, this was all the rage at the time.

And we got into this car and we went for about a 30-minute drive around Palo Alto highways, streets and so on. And this drive was perfect. There was zero interventions, and this was 2013, which is now 12 years ago. And it struck me because at the time when I had this perfect drive, this perfect demo, I felt like, "Wow, self-driving is imminent because this just worked. This is incredible."

But here we are 12 years later and we are still working on autonomy. We are still working on driving agents, and even now we haven't actually really solved the problem. You may see Waymos going around and they look driverless, but you know there's still a lot of teleoperation and a lot of human in the loop of a lot of this driving, so we still haven't even declared success, but I think it's definitely going to succeed at this point, but it just took a long time.

And so I think software is really tricky, in the same way that driving is tricky. So when I see things like "Oh, 2025 is the year of agents," I get very concerned, and I feel like this is the decade of agents and this is going to be quite some time. We need humans in the loop. We need to do this carefully. This is software. Let's be serious here.

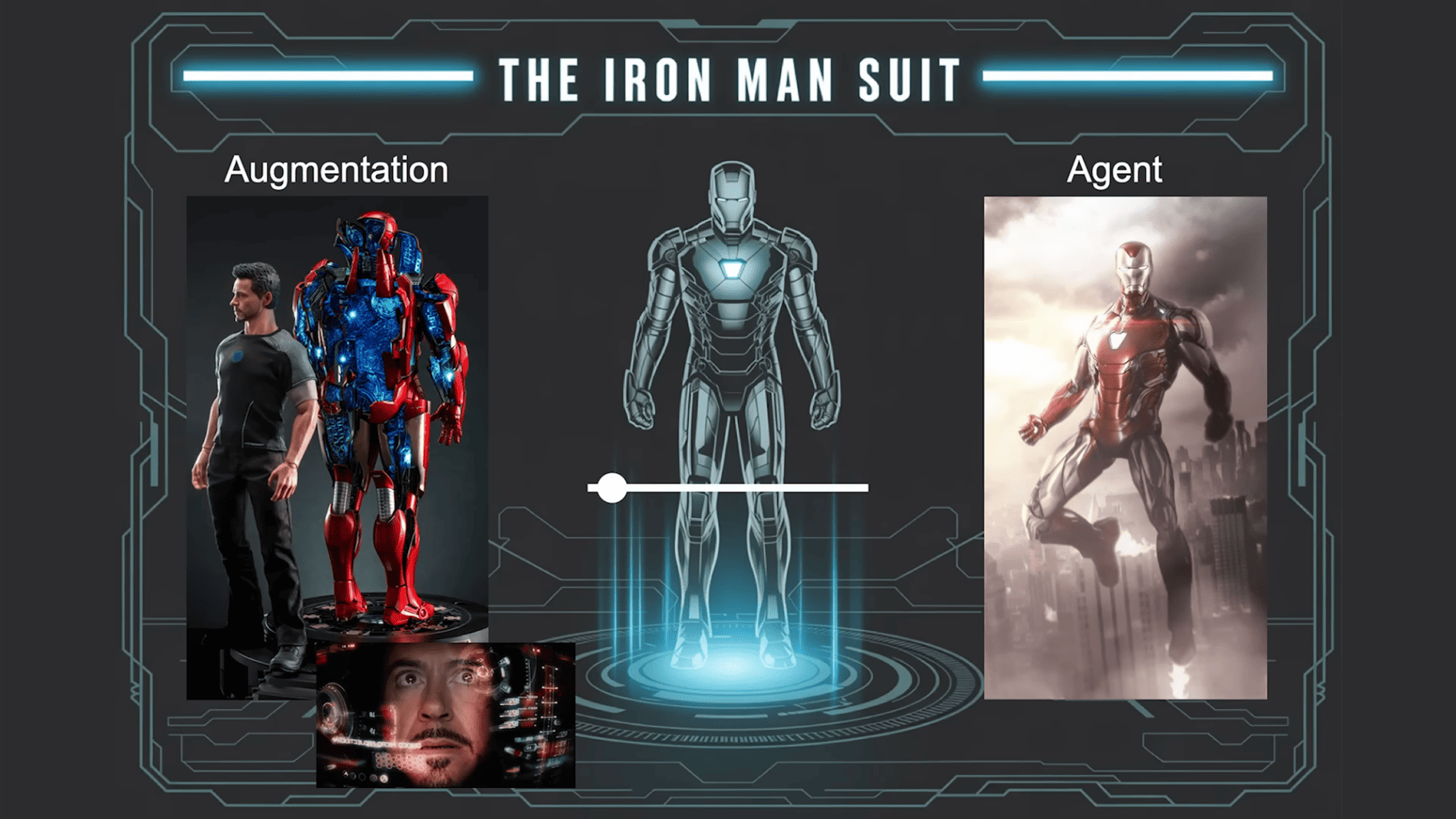

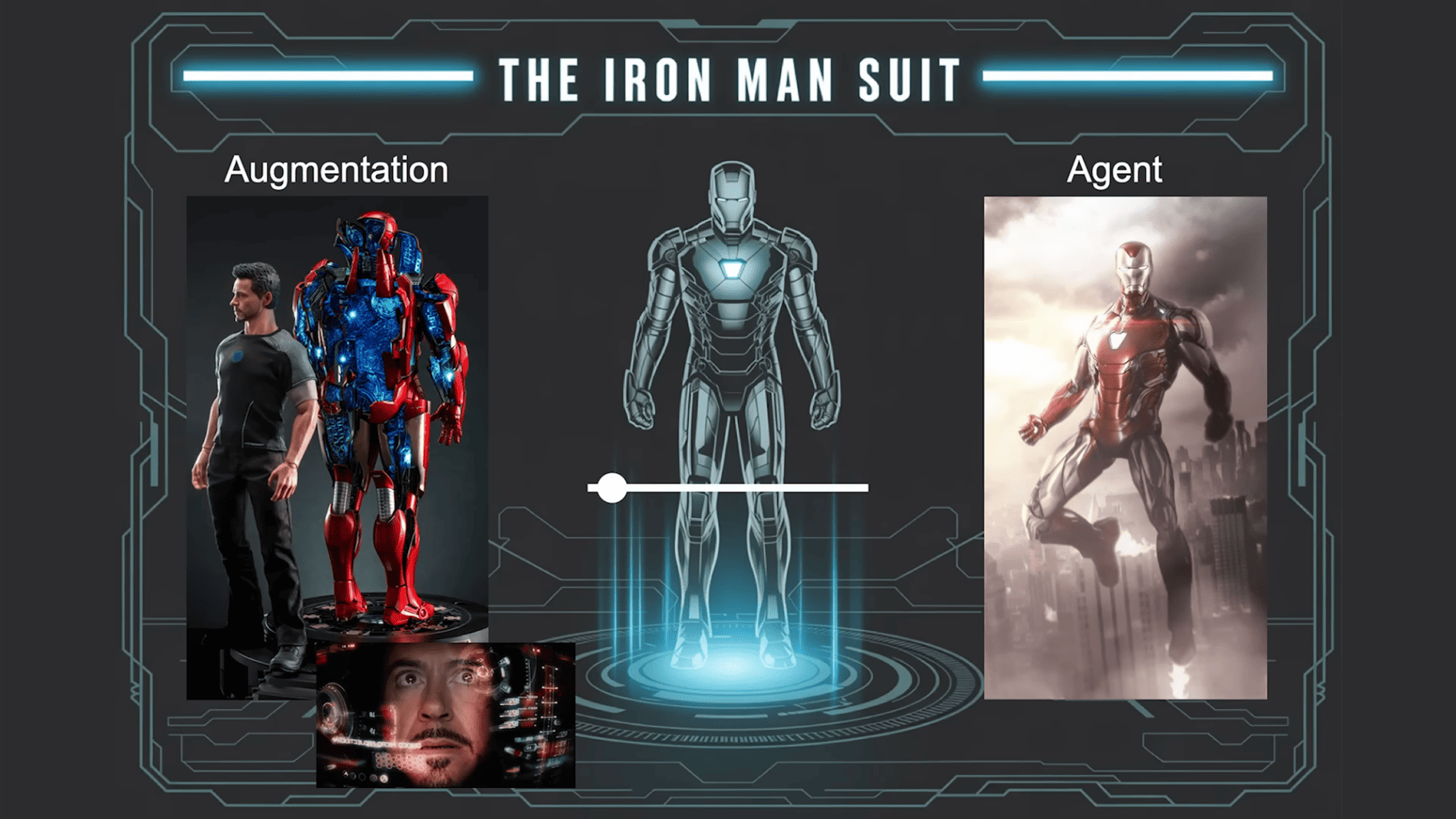

One more analogy that I always think through is the Iron Man suit. I always love Iron Man. I think it's so correct in a bunch of ways with respect to technology and how it will play out. And what I love about the Iron Man suit is that it's both an augmentation and Tony Stark can drive it, and it's also an agent. And in some of the movies, the Iron Man suit is quite autonomous and can fly around and find Tony and all this stuff.

And so this is the autonomy slider: we can build augmentations or we can build agents, and we kind of want to do a bit of both. But at this stage, I would say working with fallible LLMs and so on, I would say it's less Iron Man robots and more Iron Man suits that you want to build. It's less like building flashy demos of autonomous agents and more building partial autonomy products.

And these products have custom GUIs and UI/UX, and we're trying to do this so that the generation-verification loop of the human is very, very fast. But we are not losing sight of the fact that it is in principle possible to automate this work, and there should be an autonomy slider in your product, and you should be thinking about how you can slide that autonomy slider and make your product more autonomous over time. But this is how I think there's lots of opportunities in these kinds of products.
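One way to picture an autonomy slider inside a product is as an ordered setting that caps what the tool may do on its own. The sketch below is hypothetical: the level names loosely follow the Cursor example from earlier in the talk, and the `Task` type and `effective_autonomy` function are invented for illustration.

```python
from dataclasses import dataclass
from enum import IntEnum


class Autonomy(IntEnum):
    SUGGEST = 1         # e.g. tab completion; the human is mostly in charge
    EDIT_SELECTION = 2  # change one selected chunk
    EDIT_FILE = 3       # change a whole file
    AGENT = 4           # act across the whole repository


@dataclass
class Task:
    description: str
    requested: Autonomy


def effective_autonomy(task: Task, slider: Autonomy) -> Autonomy:
    """The user's slider caps whatever level the task asks for."""
    return min(task.requested, slider)


task = Task("rename a helper across the codebase", Autonomy.AGENT)
granted = effective_autonomy(task, slider=Autonomy.EDIT_FILE)
```

Making the product more autonomous over time then amounts to raising the default slider position as the generation-verification loop earns more trust.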

Building Autonomous Software

❌ Iron Man robots ❌ Flashy demos of autonomous agents ❌ AGI 2027

✅ Iron Man suits ✅ Partial autonomy products ✅ Custom GUI and UI/UX ✅ Fast generation-verification loop ✅ Autonomy slider

I want to now switch gears a little bit and talk about one other dimension that I think is very unique. Not only is there a new type of programming language that allows for autonomy in software, but also, as I mentioned, it's programmed in English, which is this natural interface, and suddenly everyone is a programmer because everyone speaks natural language like English. So this is extremely bullish and very interesting to me and also completely unprecedented, I would say. It used to be the case that you need to spend five to 10 years studying something to be able to do something in software. This is not the case anymore.

Make software highly accessible 👶

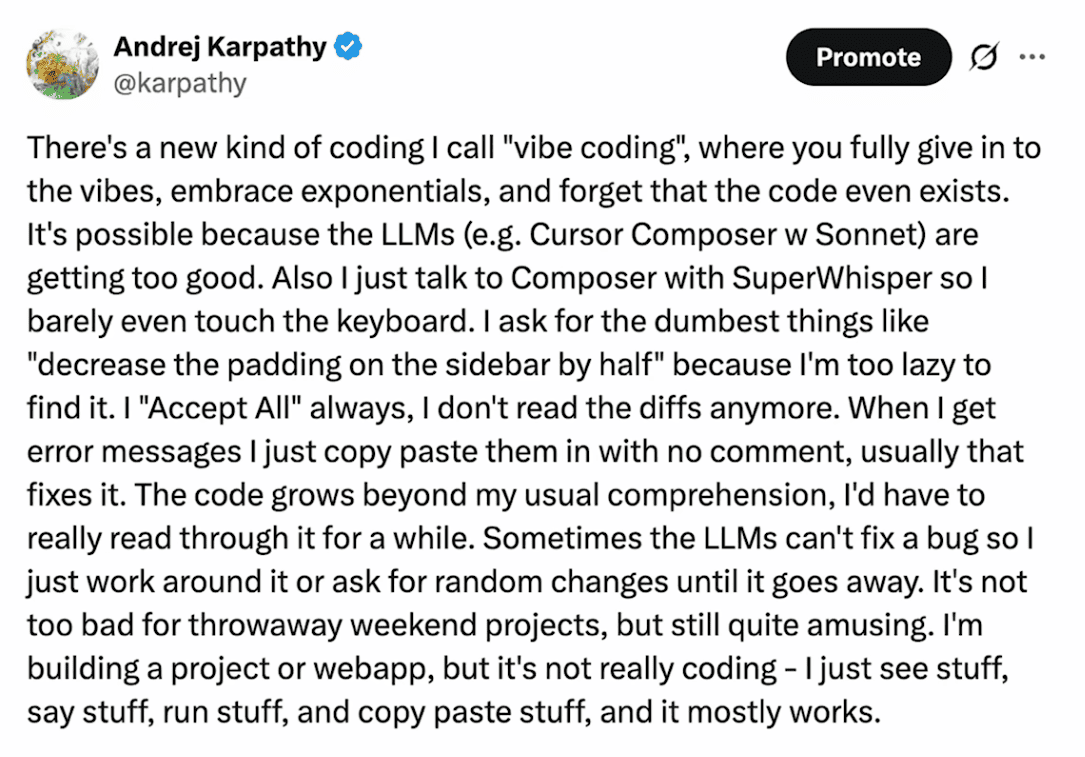

So, I don't know if by any chance anyone has heard of vibe coding. This is the tweet that introduced this, but I'm told that this is now a major meme. Fun story about this is that I've been on Twitter for like 15 years or something like that at this point, and I still have no clue which tweet will become viral and which tweet fizzles and no one cares. And I thought that this tweet was going to be the latter. I don't know. It was just a shower thought. But this became a total meme, and I really just can't tell. But I guess it struck a chord and it gave a name to something that everyone was feeling but couldn't quite say in words. So now there's a Wikipedia page and everything. This is a major contribution now or something like that.

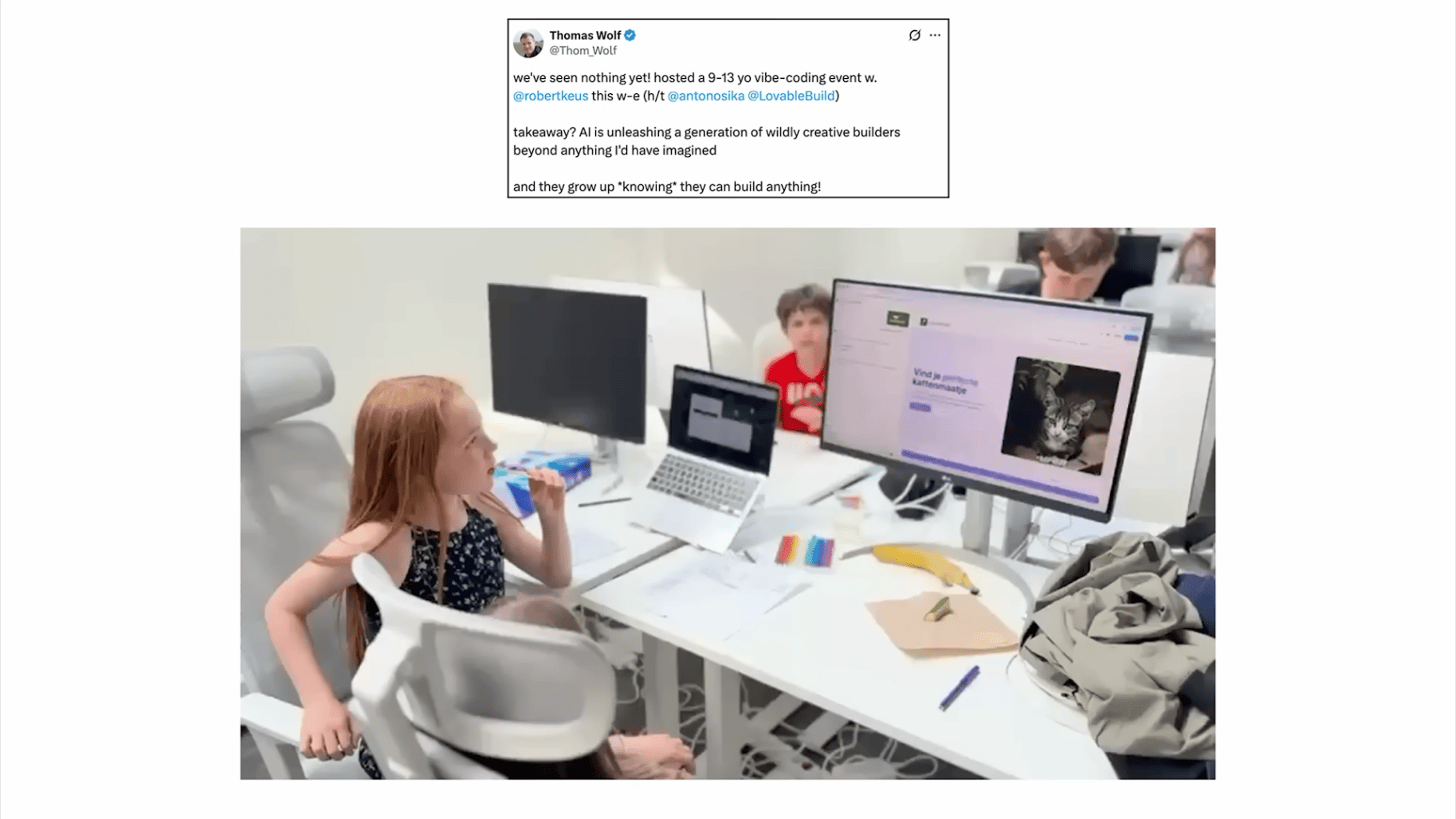

So Tom Wolf from Hugging Face shared this beautiful video that I really love. These are kids vibe coding. And I find that this is such a wholesome video. I love this video. How can you look at this video and feel bad about the future? The future is great. I think this will end up being a gateway drug to software development. I'm not a doomer about the future of the generation, and I think, yeah, I love this video.

So I tried vibe coding a little bit as well because it's so fun. Vibe coding is so great when you want to build something super duper custom that doesn't appear to exist and you just want to wing it because it's a Saturday or something like that.

So I built this iOS app, and I can't actually program in Swift, but I was really shocked that I was able to build a super basic app, and I'm not going to explain it. It's really dumb, but this was just a day of work, and this was running on my phone later that day, and I was like, "Wow, this is amazing." I didn't have to read through Swift for five days or something like that to get started.

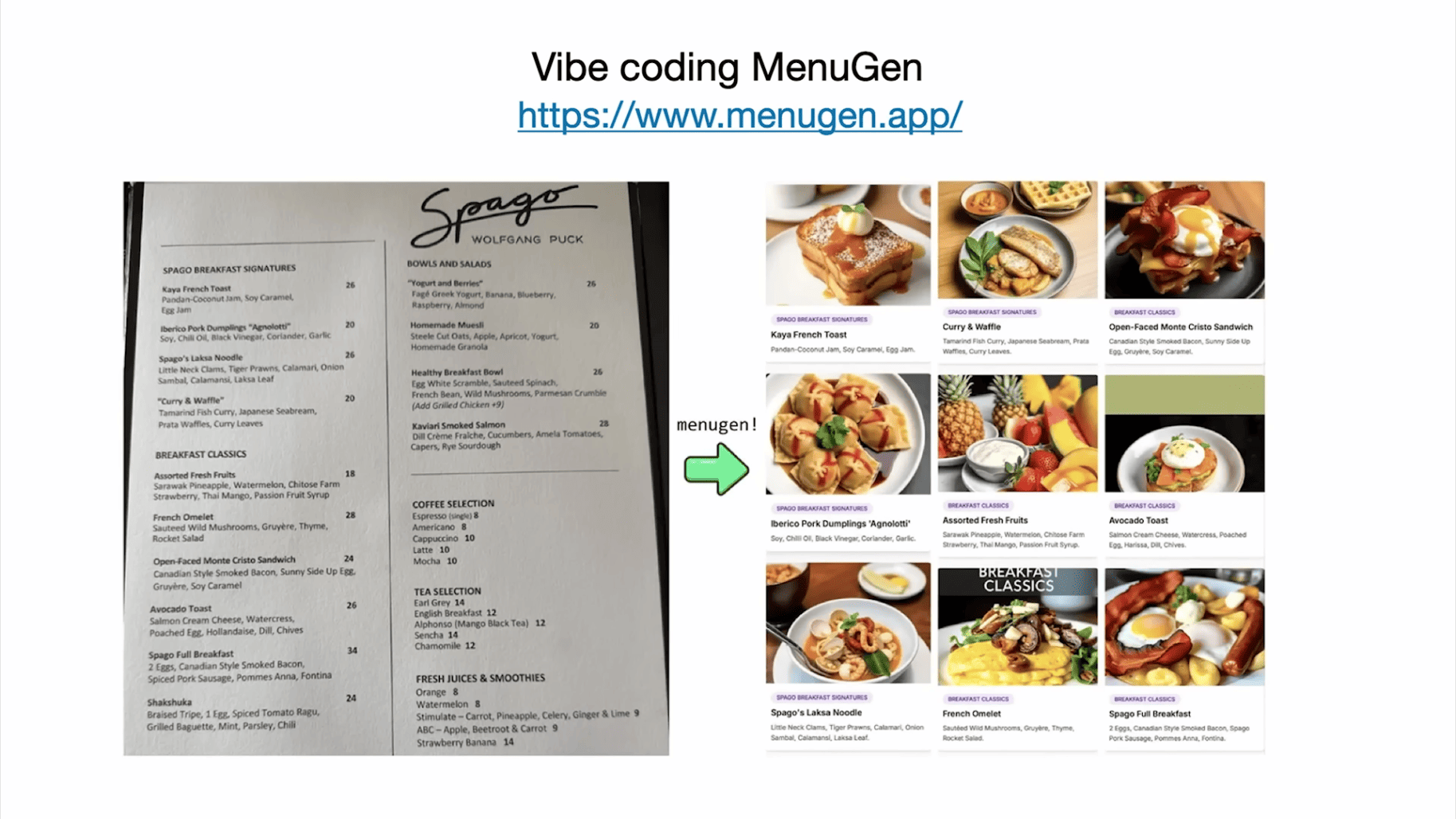

I also vibe-coded this app called MenuGen. And this is live. You can try it at menugen.app. And I basically had this problem where I show up at a restaurant, I read through the menu, and I have no idea what any of the things are. And I need pictures. So this doesn't exist. So I was like, "Hey, I'm going to vibe code it."

So this is what it looks like. You go to menugen.app, and you take a picture of a menu, and then MenuGen generates the images, and everyone gets $5 in credits for free when you sign up. And therefore, this is a major cost center in my life. So this is a negative revenue app for me right now. I've lost a huge amount of money on MenuGen. Okay.

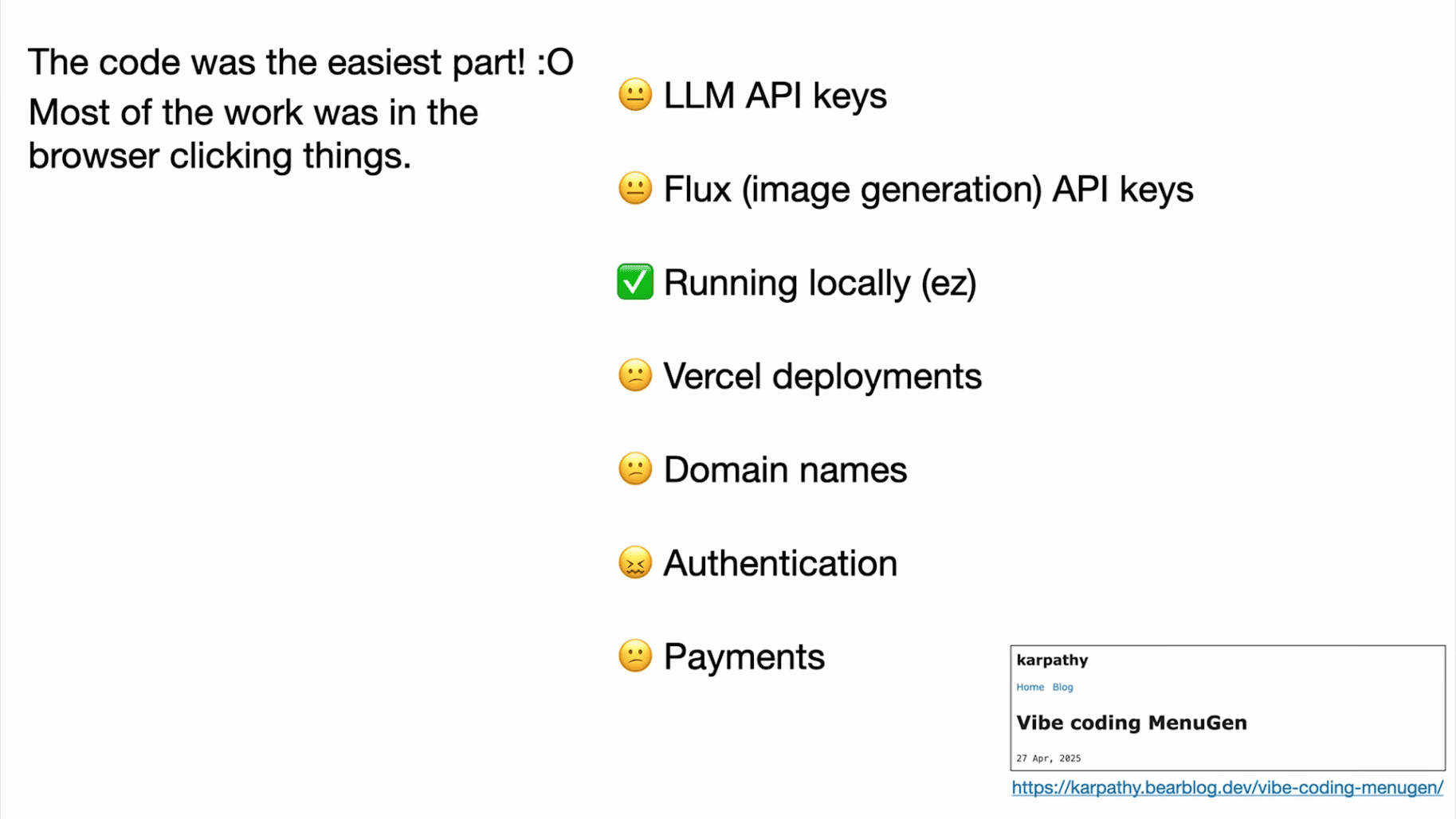

But the fascinating thing about MenuGen for me is that the code was actually the easy part of vibe coding MenuGen. Most of the work actually was when I tried to make it real, so that you can actually have authentication and payments and the domain name and Vercel deployment. This was really hard, and all of this was not code. All of this DevOps stuff was me in the browser clicking stuff, and this was extremely slow and took another week.

So it was really fascinating that I had the MenuGen basically demo working on my laptop in a few hours, and then it took me a week because I was trying to make it real, and the reason for this is this was just really annoying.

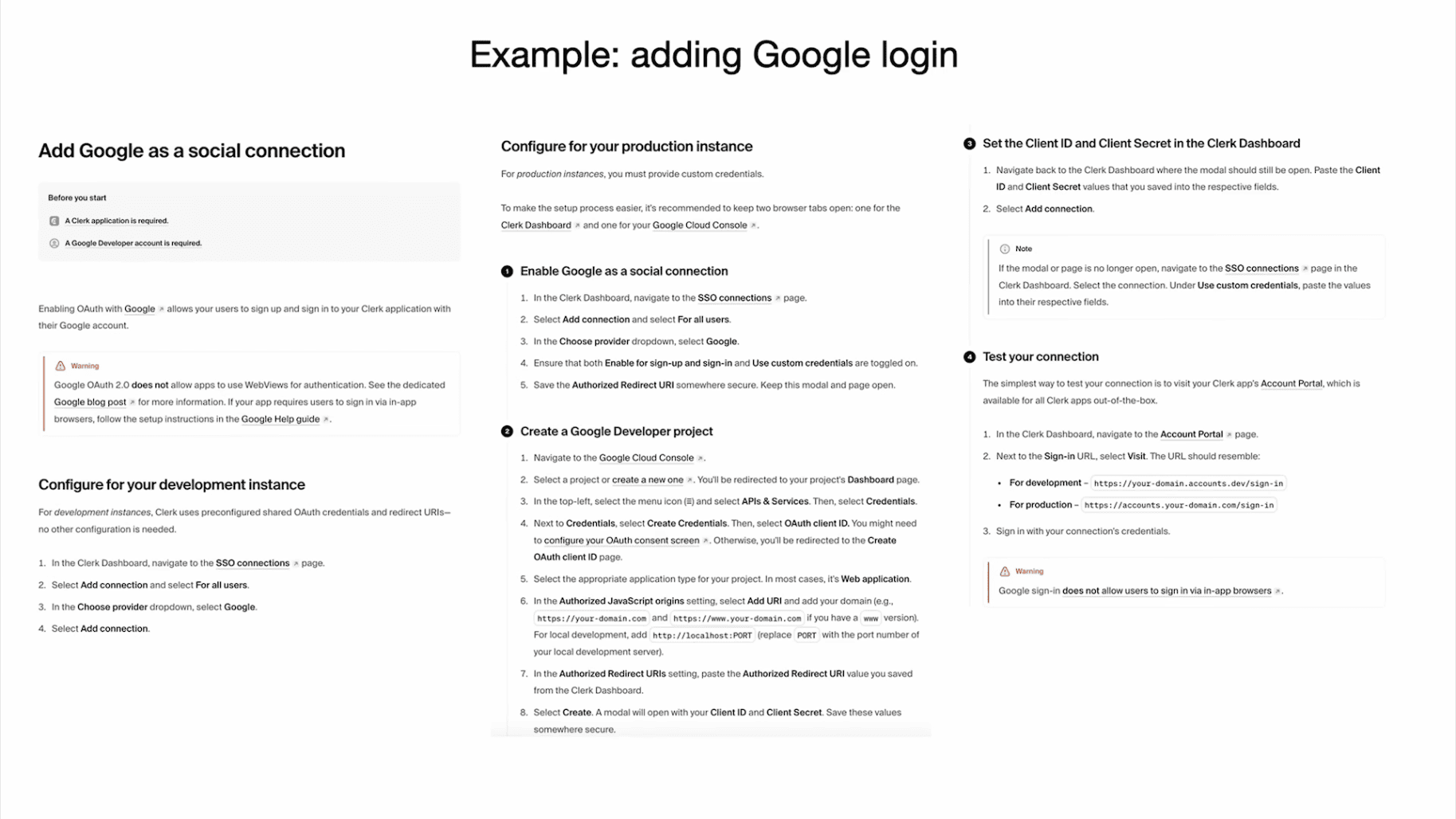

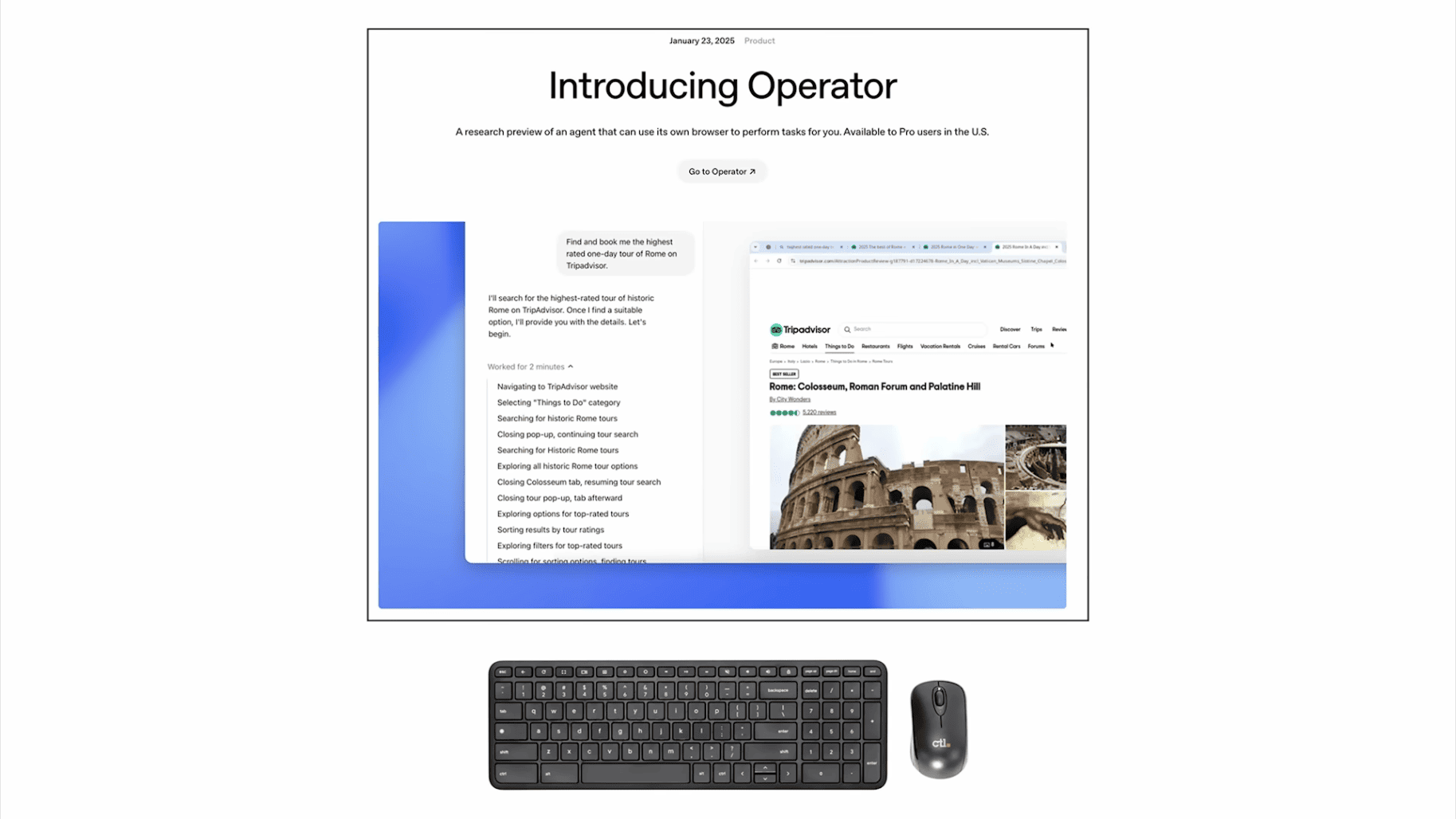

So for example, if you try to add Google Login to your web page, I know this is very small, but there's just a huge amount of instructions from this Clerk library telling me how to integrate this. And this is crazy. It's telling me go to this URL, click on this dropdown, choose this, go to this, and click on that. And it's telling me what to do. A computer is telling me the actions I should be taking. You do it. Why am I doing this? What the hell? I had to follow all these instructions. This was crazy.

So I think the last part of my talk therefore focuses on: can we just build for agents? I don't want to do this work. Can agents do this?

So roughly speaking, I think there's a new category of consumer and manipulator of digital information. It used to be just humans through GUIs or computers through APIs. And now we have a completely new thing: agents. They're computers, but they are human-like, right? They're people spirits. There are people spirits on the internet, and they need to interact with our software infrastructure. Can we build for them? It's a new thing.

There is a new category of consumer/manipulator of digital information:

1. Humans (GUIs)
2. Computers (APIs)
3. NEW: Agents <- computers... but human-like

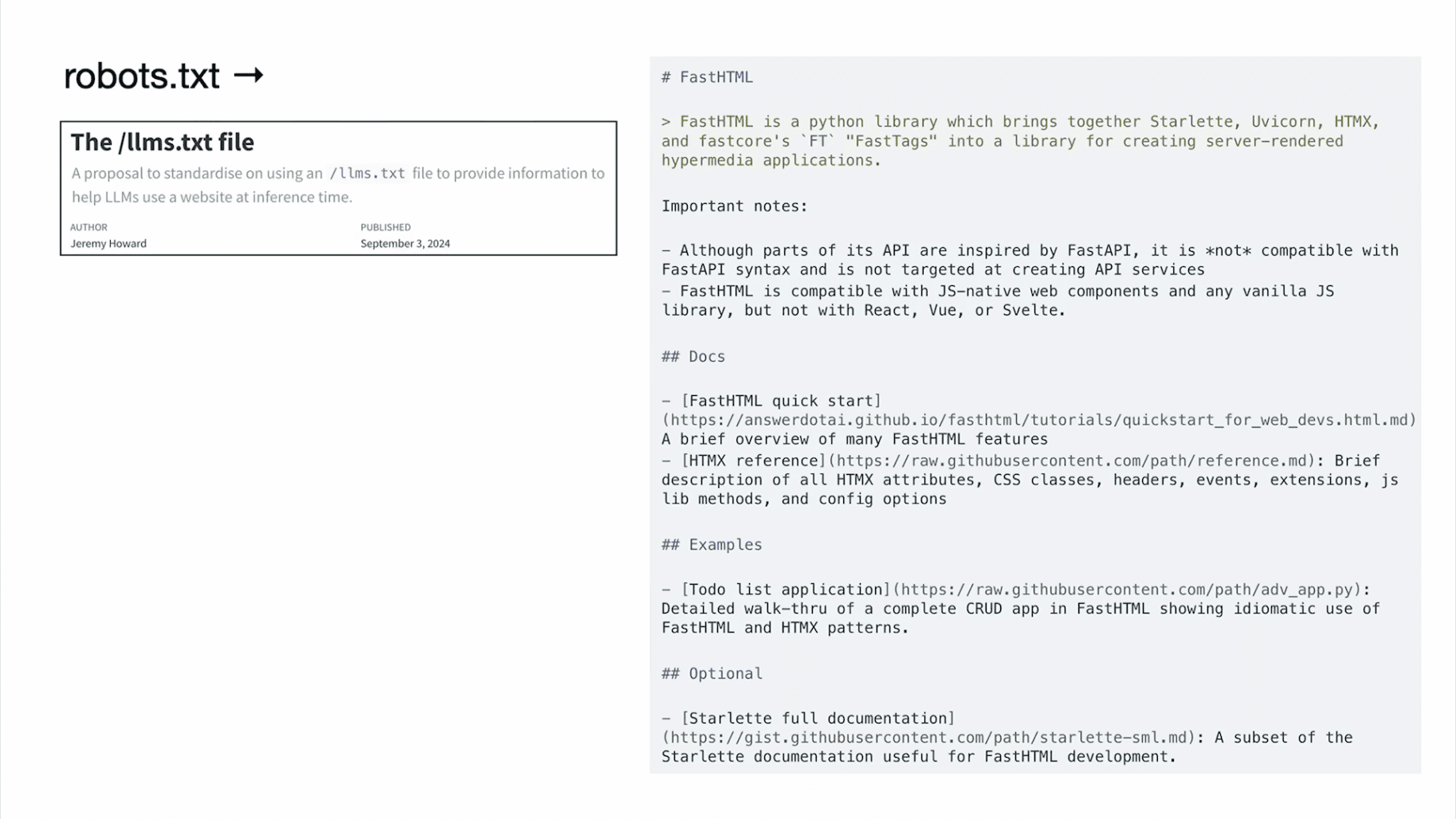

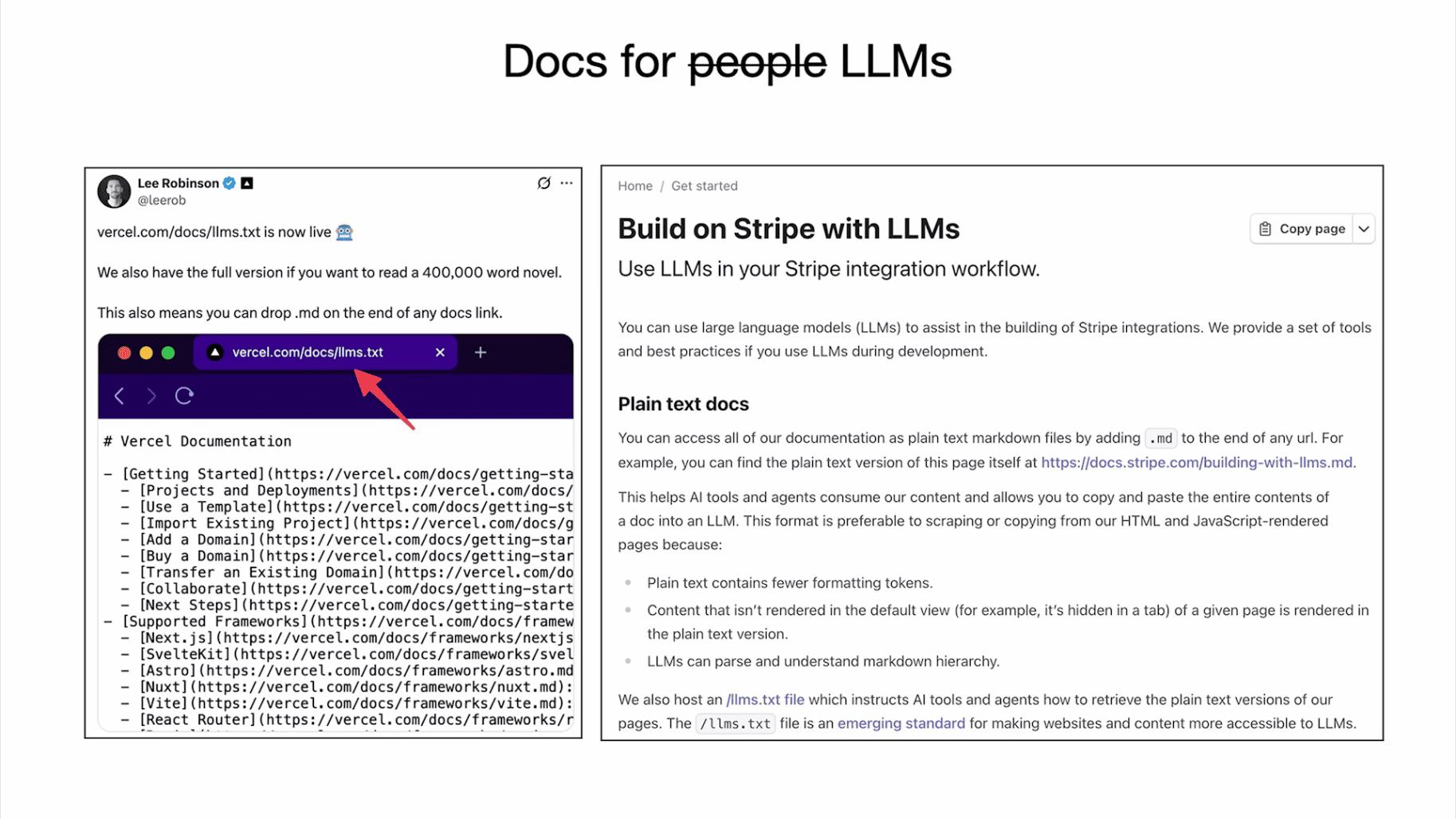

So as an example, you can have robots.txt on your domain and you can instruct or advise web crawlers on how to behave on your website. In the same way, you can have maybe llms.txt file which is just a simple markdown that's telling LLMs what this domain is about, and this is very readable to an LLM. If it had to instead get the HTML of your web page and try to parse it, this is very error-prone and difficult and will screw it up and it's not going to work. So we can just directly speak to the LLM. It's worth it.
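As a rough illustration of the idea above, here is a minimal sketch that renders a small llms.txt-style Markdown file. Everything specific in it is an assumption made for illustration: the site name, the summary, the example.com URLs, and the `build_llms_txt` helper are hypothetical, and the layout (a title, a one-line summary, then sections of links) is just one plausible shape for such a file, not a definitive format.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class DocLink:
    """One entry pointing an LLM at a plain-Markdown page (hypothetical type)."""
    title: str
    url: str
    note: str = ""


def build_llms_txt(site_name: str, summary: str,
                   sections: dict[str, list[DocLink]]) -> str:
    """Render a title, a short summary, and link sections as Markdown."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for link in links:
            suffix = f": {link.note}" if link.note else ""
            lines.append(f"- [{link.title}]({link.url}){suffix}")
        lines.append("")
    # Normalize to exactly one trailing newline.
    return "\n".join(lines).rstrip() + "\n"


# Made-up site and URLs, purely for illustration.
EXAMPLE = build_llms_txt(
    "Example Project",
    "A small service; this file tells LLMs what the domain is about.",
    {
        "Docs": [
            DocLink("Quickstart", "https://example.com/docs/quickstart.md",
                    "install and first run"),
            DocLink("API reference", "https://example.com/docs/api.md"),
        ],
    },
)

if __name__ == "__main__":
    print(EXAMPLE)
```

The point of the sketch is only that the output is flat, link-rich Markdown that an LLM can read directly, instead of HTML it would have to parse.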

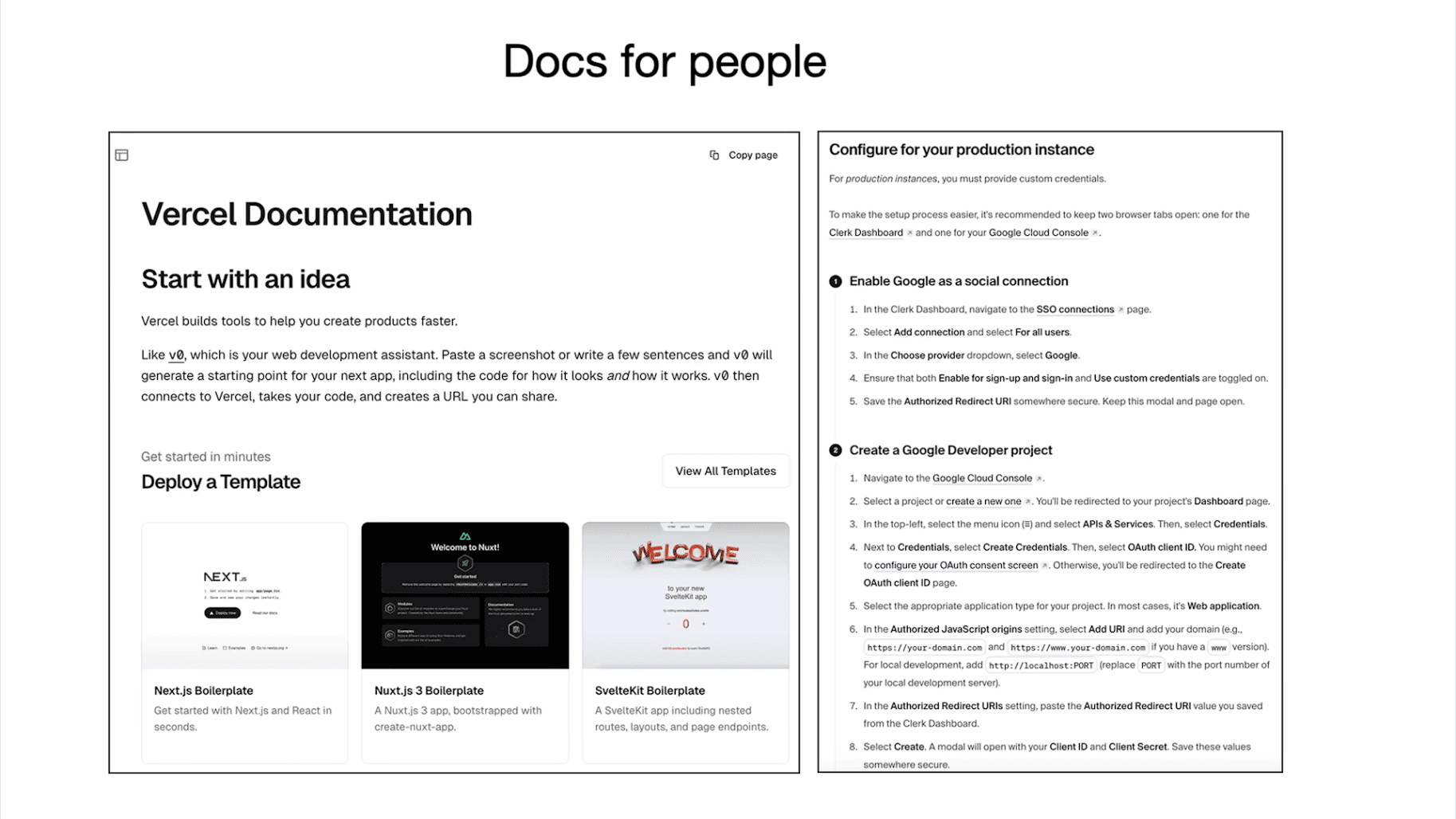

A huge amount of documentation is currently written for people. So you will see things like lists and bold and pictures, and this is not directly accessible by an LLM.

So I see some of the services now are transitioning a lot of their docs to be specifically for LLMs. So Vercel and Stripe as an example are early movers here, but there are a few more that I've seen already, and they offer their documentation in Markdown. Markdown is super easy for LLMs to understand. This is great.

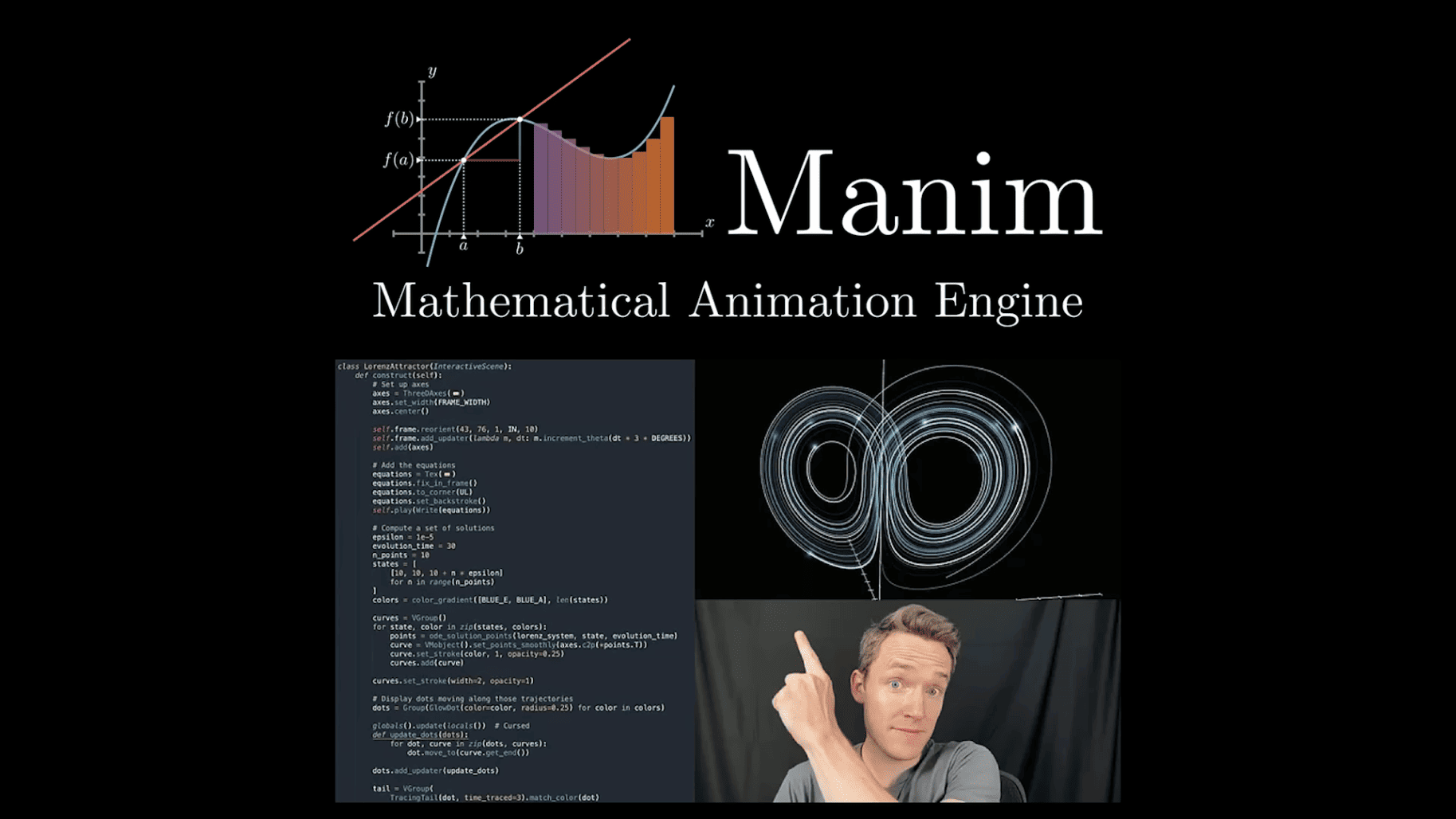

Maybe one simple example from my experience as well. Maybe some of you know 3Blue1Brown. He makes beautiful animation videos on YouTube. He wrote Manim, and I love this library. I wanted to make my own animation, and there's extensive documentation on how to use Manim, and I didn't want to actually read through it. So I copy-pasted the whole thing to an LLM and I described what I wanted, and it just worked out of the box. The LLM just vibe-coded me an animation that was exactly what I wanted, and I was like, "Wow, this is amazing."

So if we can make docs legible to LLMs, it's going to unlock a huge amount of use, and I think this is wonderful and should happen more.

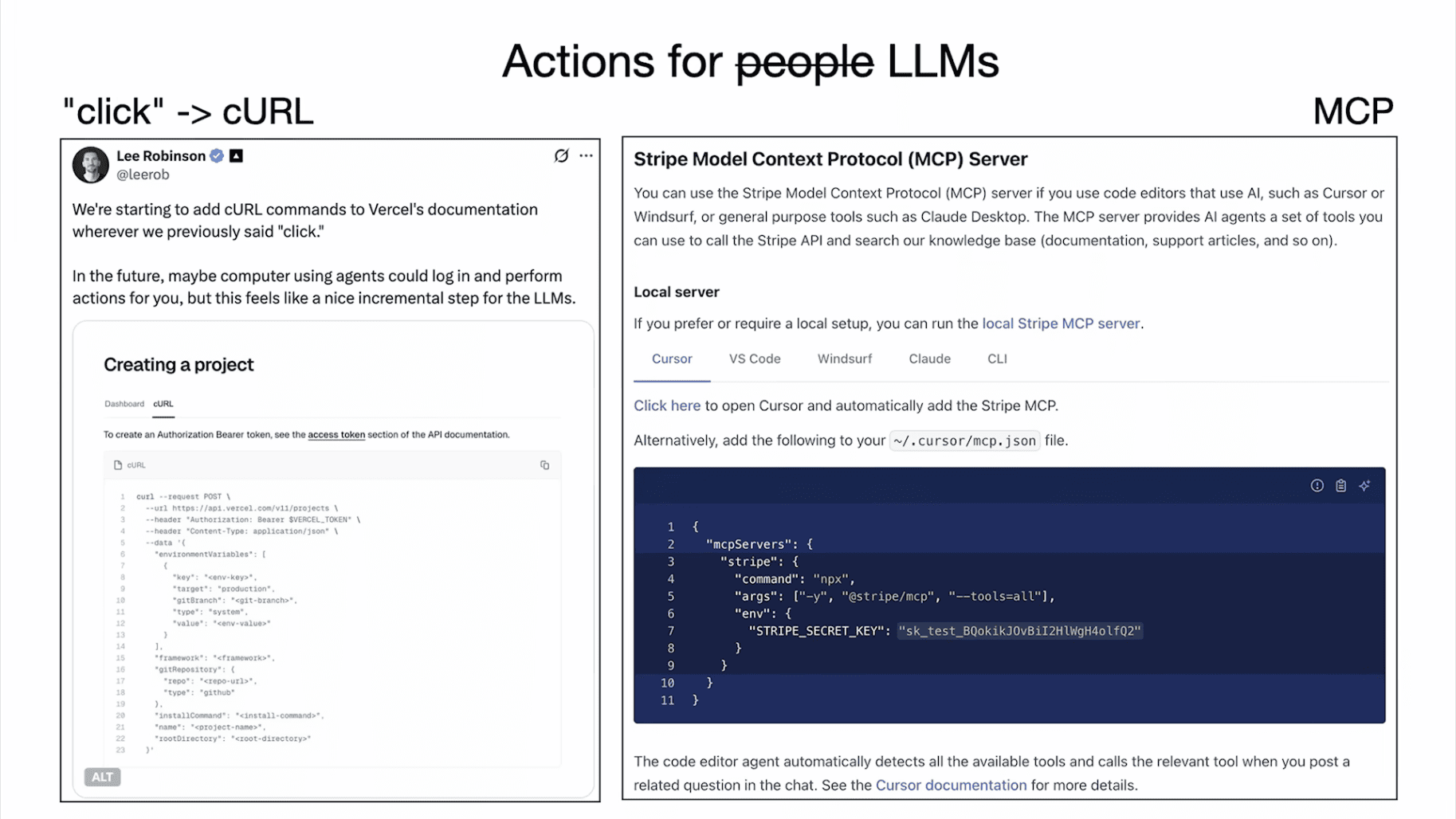

The other thing I wanted to point out is that you do unfortunately have to — it's not just about taking your docs and making them appear in markdown. That's the easy part. We actually have to change the docs because anytime your docs say "click," this is bad. An LLM will not be able to natively take this action right now.

So, Vercel, for example, is replacing every occurrence of "click" with an equivalent curl command that your LLM agent could take on your behalf. And so I think this is very interesting.
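To make the "click" versus command distinction concrete, here is a minimal sketch that turns a documented step into a curl command string an agent could run. The `to_curl` helper, the `api.example.com` endpoint, and the request body are all hypothetical and are not taken from Vercel's docs or any real API; the sketch only builds the string and sends nothing.

```python
import json
import shlex


def to_curl(method, url, headers=None, body=None):
    """Render an HTTP action as a shell-safe curl command string."""
    parts = ["curl", "-X", method.upper(), shlex.quote(url)]
    for name, value in (headers or {}).items():
        parts += ["-H", shlex.quote(f"{name}: {value}")]
    if body is not None:
        # Send the body as JSON text; quoting keeps it one shell argument.
        parts += ["-d", shlex.quote(json.dumps(body))]
    return " ".join(parts)


# Instead of "click 'New Project' and type a name", the docs could say:
COMMAND = to_curl(
    "post",
    "https://api.example.com/v1/projects",  # hypothetical endpoint
    headers={"Content-Type": "application/json"},
    body={"name": "my-app"},
)

if __name__ == "__main__":
    print(COMMAND)
```

A step written this way is something an agent can execute and check, whereas a step written as a GUI instruction has to be carried out by a person.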

And then, of course, there's the Model Context Protocol from Anthropic. And this is also another way — it's a protocol of speaking directly to agents as this new consumer and manipulator of digital information. So I'm very bullish on these ideas.

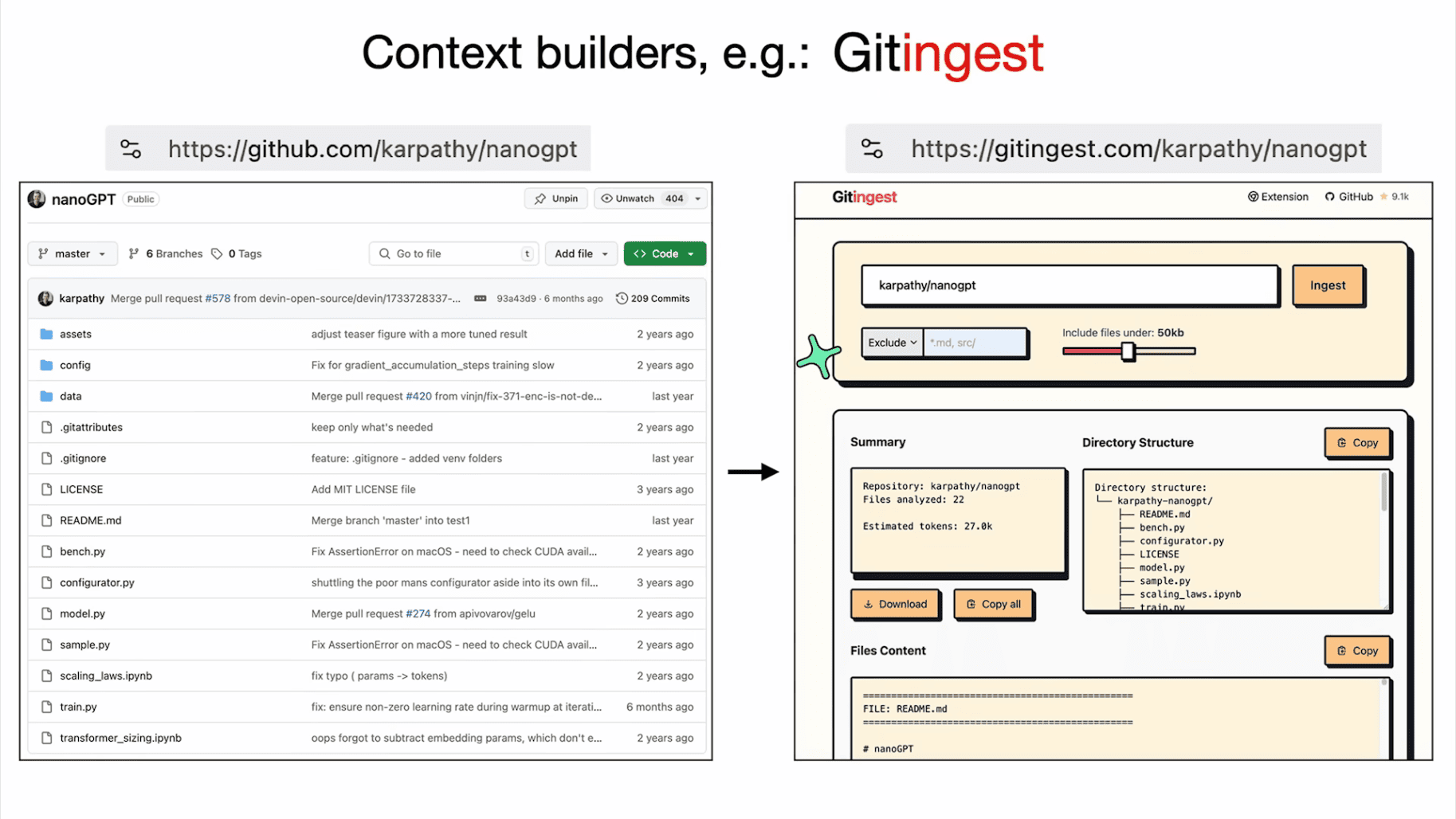

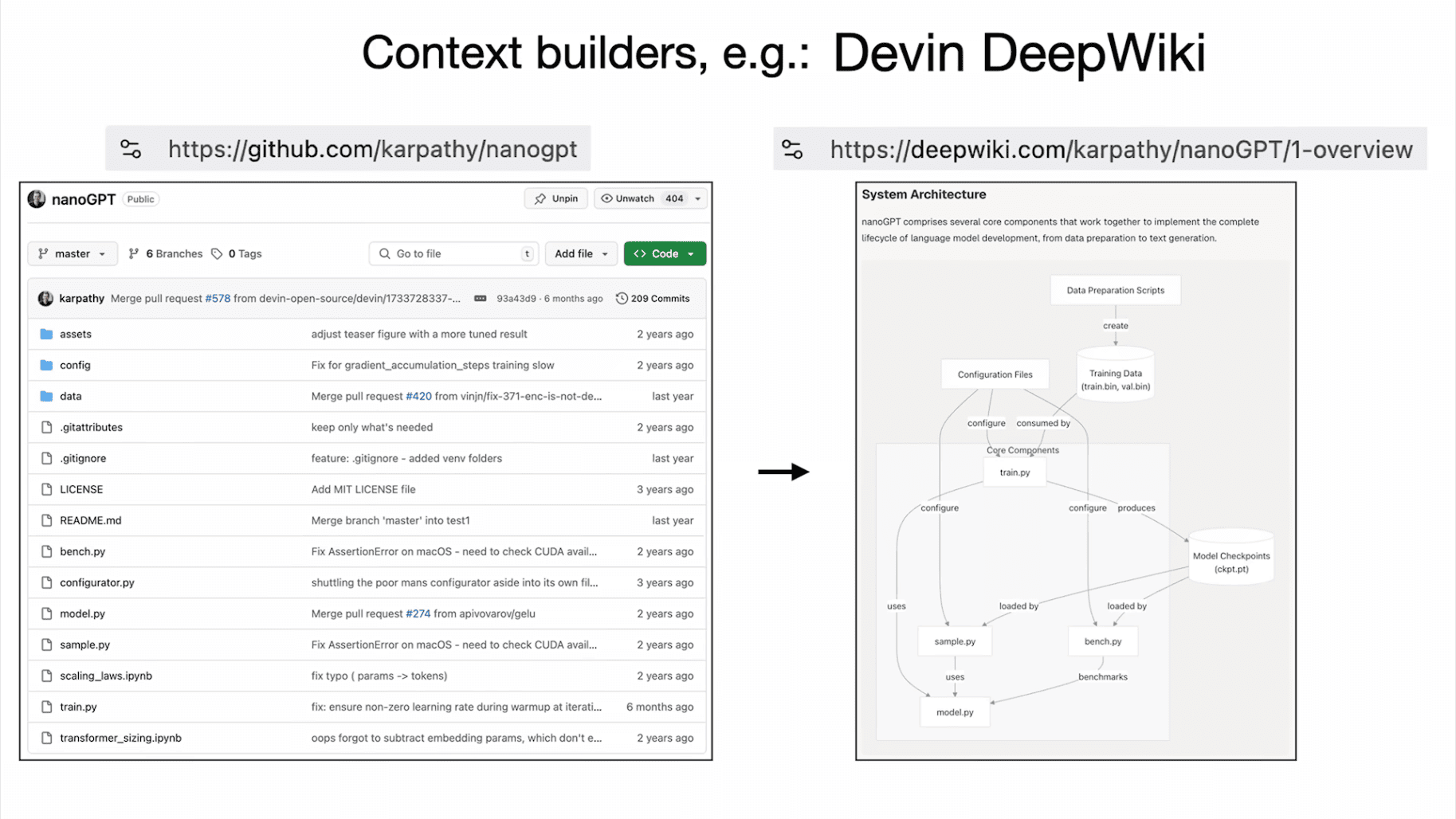

The other thing I really like is a number of little tools here and there that are helping ingest data in very LLM-friendly formats. So for example, when I go to a GitHub repo like my nanoGPT repo, I can't feed this to an LLM and ask questions about it because it's a human interface on GitHub.

So when you just change the URL from github.com to gitingest.com, then this will actually concatenate all the files into a single giant text and it will create a directory structure, etc. And this is ready to be copy-pasted into your favorite LLM and you can do stuff.
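The two steps described above can be sketched in a few lines. The first function only rewrites the host in the URL, as described in the talk. The second is a local approximation of the "one giant text plus directory structure" idea; its output layout, the `concat_repo` name, and the file-suffix filter are assumptions for illustration and are not how the actual service formats its output.

```python
from pathlib import Path
from urllib.parse import urlsplit, urlunsplit


def to_gitingest_url(github_url: str) -> str:
    """Swap the github.com host for gitingest.com, keeping the repo path."""
    parts = urlsplit(github_url)
    if parts.netloc.lower() not in ("github.com", "www.github.com"):
        raise ValueError(f"not a GitHub URL: {github_url}")
    return urlunsplit(parts._replace(netloc="gitingest.com"))


def concat_repo(root: Path, suffixes=(".py", ".md", ".txt")) -> str:
    """Join a local checkout into one text: a file listing, then each file."""
    files = sorted(p for p in root.rglob("*")
                   if p.is_file() and p.suffix in suffixes)
    listing = "\n".join(p.relative_to(root).as_posix() for p in files)
    chunks = [f"Directory structure:\n{listing}\n"]
    for p in files:
        rel = p.relative_to(root).as_posix()
        text = p.read_text(encoding="utf-8", errors="replace")
        chunks.append(f"\n===== {rel} =====\n{text}\n")
    return "".join(chunks)


if __name__ == "__main__":
    print(to_gitingest_url("https://github.com/karpathy/nanoGPT"))
    print(concat_repo(Path(".")))
```

The resulting string is the kind of thing you can paste into an LLM in one go, which is the whole appeal of the URL trick.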

Maybe an even more dramatic example of this is DeepWiki, which is from Devin. Here it's not just the raw content of these files: they also have Devin basically do analysis of the GitHub repo, and Devin basically builds up whole docs pages just for your repo, and you can imagine that this is even more helpful to copy-paste into your LLM.

So I love all the little tools where you basically just change the URL and it makes something accessible to an LLM. So this is all well and great, and I think there should be a lot more of it.

One more note I wanted to make is that it is absolutely possible that in the future — this is not even future, this is today — LLMs will be able to go around and they'll be able to click stuff and so on, but I still think it's very worth basically meeting LLMs halfway and making it easier for them to access all this information because this is still fairly expensive to use and a lot more difficult.

And so I do think that for lots of software, there will be a long tail that won't adapt, because these are not "live player" sorts of repositories or digital infrastructure, and we will need these tools. But I think for everyone else, it's very worth meeting at some middle point. So I'm bullish on both, if that makes sense.

So in summary, what an amazing time to get into the industry. We need to rewrite a ton of code. A ton of code will be written by professionals and by coders. These LLMs are kind of like utilities, kind of like fabs, but they're especially like operating systems. But it's so early. It's like the 1960s of operating systems, and I think a lot of the analogies cross over.

And these LLMs are like these fallible people spirits that we have to learn to work with. And in order to do that properly, we need to adjust our infrastructure towards them. So when you're building these LLM apps, I described some of the ways of working effectively with these LLMs, some of the tools that make that possible, and how you can spin this loop very, very quickly and basically create partial autonomy products. And then a lot of code also has to be written for the agents more directly.

But in any case, going back to the Iron Man suit analogy, I think what we'll see over the next decade roughly is we're going to take the slider from left to right. And I'm very interested. It's going to be very interesting to see what that looks like. And I can't wait to build it with all of you. Thank you.